Catch the on-demand session with gaming legend John Romero and see how we’re redefining software quality at AI speed.

Integrate monitoring, observability, and alerting into the core quality engineering process to ensure systems are as diagnosable as they are functional.

Feature flags entered our workflow as a quality safeguard.

Implement the Playwright Page Object Model (POM) to build maintainable, resilient test suites by centralizing page interactions and separating them from test logic.
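As a rough illustration of what "centralizing page interactions" can look like, here is a minimal sketch of a page object. The `LoginPage` class, the `/login` URL (which assumes a configured base URL), and all selectors are hypothetical. `PageLike` is a hand-written subset of the Playwright `Page` methods `goto`, `fill`, and `click`, so the sketch runs without a browser; in a real suite you would pass Playwright's own `Page` instead.

```typescript
// Minimal subset of the Playwright Page API used in this sketch.
// A real Playwright `Page` is expected to fit this shape structurally.
interface PageLike {
  goto(url: string): Promise<unknown>;
  fill(selector: string, value: string): Promise<void>;
  click(selector: string): Promise<void>;
}

// Hypothetical page object: the URL and selectors below are assumptions.
class LoginPage {
  private readonly page: PageLike;
  private readonly usernameInput = "#username";
  private readonly passwordInput = "#password";
  private readonly submitButton = "button[type=submit]";

  constructor(page: PageLike) {
    this.page = page;
  }

  // Navigation lives here, so tests never hard-code the URL.
  async open(): Promise<void> {
    await this.page.goto("/login");
  }

  // One intent-level method hides three low-level interactions.
  async login(username: string, password: string): Promise<void> {
    await this.page.fill(this.usernameInput, username);
    await this.page.fill(this.passwordInput, password);
    await this.page.click(this.submitButton);
  }
}

// Recording stand-in that logs each call instead of driving a browser.
function createRecordingPage(log: string[]): PageLike {
  return {
    async goto(url) {
      log.push(`goto ${url}`);
      return null;
    },
    async fill(selector, value) {
      log.push(`fill ${selector}=${value}`);
    },
    async click(selector) {
      log.push(`click ${selector}`);
    },
  };
}
```

Test logic then reads as `await loginPage.open()` followed by `await loginPage.login(user, password)`, and a selector change touches only the `LoginPage` class rather than every test that logs in.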

Apply the "5 Whys" technique to both technical debugging and organisational workflows to uncover deep-seated root causes and implement sustainable continuous improvements.

Cultivate professional trust and accountability by using detailed documentation to demonstrate due diligence and quantify risk for stakeholders.

Leverage AI as a personalised "code coach" to bridge the gap between manual testing and automation by translating plain English into executable scripts and providing line-by-line logic explanations.

Prioritise high-impact user journeys and critical risks over exhaustive checklists to ensure essential product functions remain reliable and user-focused through a product-minded testing approach.

Shift from treating AI as a "single source of truth" to using it as a "clarity booster" for human-led ideas.

Modernise your design strategy with mobile-first and keyboard-centric approaches that create more resilient, accessible, and user-friendly software.

Transform your team’s approach to quality from a late-stage gatekeeper process into a proactive, shared responsibility that identifies risks early.

Transition from reactive quality assurance to proactive quality engineering by embedding shared responsibility throughout the entire development lifecycle.

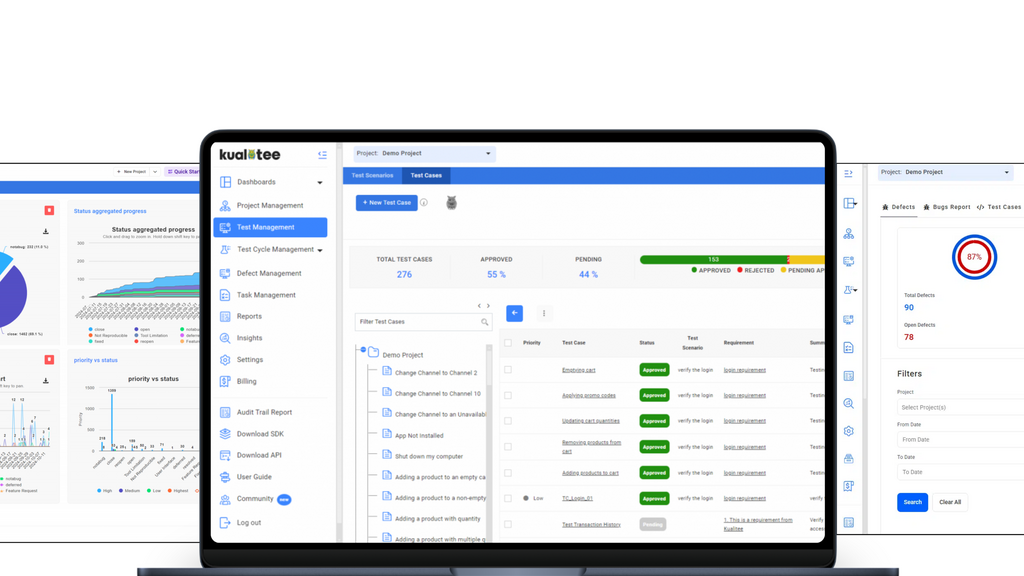

Manage your entire QA lifecycle in one place. Sync Jira, automate scripts, and use AI to accelerate your testing.

Jira Issue Connect brings live, real-time Jira data directly into TestRail, eliminating tab-switching and stale fields.

🚀 Accelerate releases with Test Impact Analysis.