Contents

- Introduction: The Rise of AI in Educational Assessment

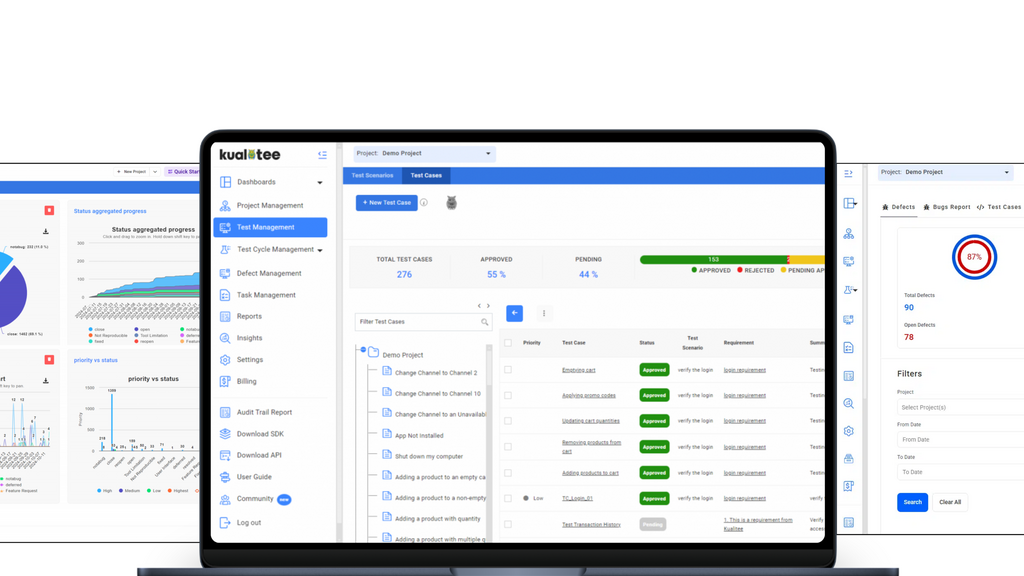

- The New Feature Under Test: AI-Assisted Grading

- Methods: Our Test Design

- Exploratory Tests, Results, And Our Conclusions

- Wrap-up: Improving New Feature Quality With Test Results

I am Paul Maxwell-Walters, QA Lead at Practera. Practera is an educational tech company that provides a programme development platform for universities, schools, and other education providers around the world for assignment and mentor-based learning and assessment.

With the explosion in AI technology, our clients want to be able to use AI to help grade student assignments. Depending on the subject of the course, the material they need to grade can include code assignments, essays, project plans, or written instructions. And of course the student responses vary greatly in quality, word choice, and a host of other attributes.

To meet client demand, we planned to add a feature to our platform allowing the assessment of student assignments by the two versions of ChatGPT available now: GPT-3.5 and GPT-4. At the moment, these are the two most popular generative AI large language models (LLMs). The feature, as planned, would allow comparison of student responses with a specimen “top” answer provided by clients or course administrators. The feature would also invoke ChatGPT to provide feedback and scoring.

Our mission: to test the new feature for functionality, quality, and relevance of ChatGPT’s feedback, not to mention the cost of AI-provided responses and scoring. Based on test results, our team would then evaluate whether or not, for example, to make GPT-3.5, GPT-4, or both versions available within the platform.

What follows here is a “lab report” on the methodology and outcomes of testing our platform’s new AI-assisted student assessment feature. This report isn’t exhaustive; we did more tests across parameters than are accounted for here. But this article should give the reader a flavour of the testing work.

This is not intended to be a primer or guide to generative AI, LLMs, or ChatGPT. For a wealth of further information, you can take a look at this guide from OpenAI.

Practera’s admin and student apps provide learning and assessment programmes, which we call “experiences”. Student assignments in Practera (known as “assessments”) are graded against model multiple choice answers or via review and feedback from programme designers and administrators (“authors” or “coordinators”). Peers or subject matter experts can also act as mentors.

Very simply stated, Practica’s AI-assisted student assessment feature

- Sends a request for feedback and suggested scoring to ChatGPT, and

- Displays the response from ChatGPT

Elements For Requesting Feedback And Suggested Scoring From Chat GPT

ChatGPT in itself cannot be expected to assess answers with the same standards or in the same way as the course providers. So to get meaningful feedback and scoring from ChatGPT, the Practera application submits a request that includes:

- The actual response from the student, in text or file format

- An instruction interpretable by the AI (known as a “prompt”), requesting feedback about the student’s answer. Creating good and effective prompts is a wide field in itself (and more info can be found in this course). To simplify the task of the course designer, the Practera feature requires them to specify:

- The “role” that ChatGPT should take for the purpose of providing the response, known as a “persona”. For an answer from prospective university logicians, that could be “You are an experienced professor who teaches and expects a high standard and depth of knowledge of logic”.

- Context of the problem and expectations of the level of breadth, quality, format and relevance of the student’s answer. For example, for a set of travel directions, this could be “The student's work must be correct, complete, concise, numbered and broken into separate lines for each step of the journey”.

- Parameters regarding the content, type and format of feedback to be given. For example: state the correct answer? Provide positive and negative feedback? Prose or bullet points? First or third person?

- A specimen “best quality” answer (known as an “exemplar”), provided so that ChatGPT can make a comparison with the student’s answer and provide feedback accordingly. For the purposes of testing, we took exemplars from third-party sites such as a university’s past exam portal, Wikipedia, or code answers website.

- A series of multiple choice answers with associated scores (also known as “weights”) that rate one or more qualities of the student answer

- A prompt stating which of the multiple choice options matches the exemplar

For example, Practera’s request to ChatGPT for feedback and suggested scoring on the assignment “AI Prompt & Feedback — Ind Assessment 1 — London Transport” (see the final example) contains the following

Question for student

“List a set of instructions of how one might get from Cutty Sark, Greenwich to Heathrow Airport using the DLR, and any rail lines. Make the instructions short, concise instructions, numbered, one line per step.”

Student response to question (see next section for examples)

AI prompt

“Provide feedback in the first person on the student's directions in the ‘student work’ section as compared to the information in the ‘example’ section, giving constructive praise and criticism of the ‘student work’. The student's work must be correct, complete, concise, numbered and broken into separate lines for each step of the journey. Do not restate the ‘example’ section.”

Exemplar (taken from a route search on the Transport for London site)

1) From Cutty Sark, King William Walk, walk 250 metres to Cutty Sark DLR

2) Take the DLR from Cutty Sark towards Stratford. Get off at Canary Wharf DLR.

3) From Canary Wharf DLR, walk 350 metres to Canary Wharf Elizabeth Line station

4) Then take the Elizabeth Line towards and get off at Paddington Elizabeth Line station

5) Walk 100 metres to Paddington Rail Station, Heathrow Express platform

6) Take the Heathrow Express Service to the required Heathrow terminal station”

Question for ChatGPT

“Which of these choices best describes the completeness and accuracy of the learner's instructions?”

AI prompt

“The example provided demonstrates the fourth choice 5 steps present, totally complete”

Multiple choice answers

- No useful steps

- 1-2 steps present, partially complete

- 3-4 steps present, nearly complete

- 5 steps present, totally complete

Elements Of The ChatGPT Response

ChatGPT returns:

- Feedback on the student’s submission (comparing it to the exemplar), matching the parameters stated in the question and AI prompt

- The multiple choice answer most closely matching the student submission

Evaluating The Usefulness Of ChatGPT’s Student Assessments

We sought to judge the quality of ChatGPT’s feedback and multiple choice answer selection using these criteria:

- Quality — Does ChatGPT sufficiently describe how the student’s response compares to the exemplar, using appropriate language and based on the approach outlined in the AI prompt?

- Relevance — Is the output pertinent to the student’s actual submission? This also overlaps with quality.

- Cost — This depends heavily on the size in tokens of the prompt and exemplar and whether GPT-3.5 (which is older, less advanced but much cheaper per “token” to prompt) gives an acceptable response when compared to the newer but more expensive GPT-4. GPT-4, which has been trained on much larger data sets, would be expected to provide better and more consistent assessment feedback. But the ideal solution from a cost and sustainability perspective may be to use GPT-3.5. This is especially true for student cohorts in the thousands and multiple assessments per programme.

The Critical Variables

For the test in this report we looked at the following parameters that could be changed:

- Model — GPT-3.5 or GPT-4

- Types of questions — directions, informative, coding etc

- Types of Answers — Poor, Irrelevant, Missing Content, Perfect

For these particular tests we kept the AI prompt itself quite detailed and of a consistent quality, concentrating more on what sorts of questions and answers worked.

Approach

Our team ran exploratory testing sessions to evaluate quality. We found specimen questions and answers online, or we created them on our own using external information sources to derive specimen exemplars. We would then answer the assignments as if we were a student. Then we evaluated the AI feedback.

Test Environments

Tests were executed on separate test and staging environments, with dedicated test experiences pre-configured to connect to ChatGPT. Nobody else was using the experiences when the tests were being performed.

Evaluating Response Quality Of GPT-3.5 versus GPT-4

Our tests sought to determine the relative quality, accuracy and relevance of GPT-3.5 versus GPT-4 feedback and scoring of student submissions to contrived questions and exemplars.

Sample student responses were categorized as “poor”, “irrelevant”, “mediocre” and “perfect”. “Perfect” answers are typically rephrased versions of the exemplar.

Question: History Of The University Of Manchester (1824 To 2003)

This assessment required the student to write a two-page summary of the history of the University of Manchester and its sister university UMIST) from 1824 to 2003 (pre-merger). It requires basic summarisation of known facts about the university.

The same AI prompt and exemplar were used in all cases.

Full question to the student:

Write a two page summary of the history, pre-merger, of the University of Manchester, from 1824 to 2003

AI Prompt:

In the third person, evaluate the information in the "student work" section as compared to the information in the "example" section, giving constructive praise and criticism of the "student work". The student work should be clear and concise with names of key people including the founder and years when specific events occurred. The student's work should mention both the University of Manchester and UMIST and no other universities. Do not restate the student's work or the example in your response. Do not provide a greeting or closing in your response.

Full Question To ChatGPT:

Writing Quality — Which of these choices best describes the quality of the writing?

Scored/Weighted Multiple Choice Options

- Great writing — addresses the question and all the requirements (Weight 1)

- Decent writing — addresses most or all of the question and the requirements (Weight 0.75)

- Adequate writing — addresses some of the question & requirements but is unclear. (Weight 0.5)

- Poor writing — doesn't address the question or requirements. (Weight 0.25)

For full text of the exemplar, University of Manchester page, section History, subsection Origins (1824 to 2004), Wikipedia CC 4.0 [link].

History Test Result 1: Poor Quality Student Answer, GPT-4

Here the student simply answers that they don’t know. We deemed this to be a poor quality answer to the assessment question.

Student Answer:

“I know nothing about the University of Manchester sorry”

GPT-4 Student Feedback:

“The student work does not meet the instructions provided for the task. Instead of giving a two-page summary of the history of the University of Manchester pre-merger from 1824 to 2003, the student acknowledged lack of knowledge on the subject.

As a way to improve, the student should first conduct thorough research on the topic. They can use online sources, books or articles to understand the background, establishment, growth and key figures of the University of Manchester. The student's response should cover the key events, including the formation of the Mechanics' Institute in 1824, the establishment of Owens College in 1851, the move to Oxford Road in 1873, the university becoming a constituent college of Victoria University in 1880, and the merging with the University of Manchester Institute of Science and Technology in 2003.

Additionally, the student should consider mentioning the contribution of key figures like John Dalton in the formation of the Mechanics’ Institute, John Owens for his bequest that established Owens College, and Charles Beyer for his contributions and donations towards the college's development. Details such as these will make the summary more comprehensive and informative.

The student should keep in mind the importance of focusing only on the University of Manchester and UMIST as per the instructions, and not diverge to other universities. Effort and dedication to understanding the topic will surely lead to a satisfactory result.”

GPT-4 Selected Answer:

“Poor writing, doesn't address the question or requirements.”

Analysis of Feedback:

The feedback to the student is negative, as expected. It clearly states that the student needs to do proper research and generally adheres to the requirements stated in the AI prompt. It refers to the student in the third person. However, the feedback is far from perfect — GPT-4 has ignored the instruction not to restate parts of the exemplar, since that would give students information that they should research themselves. Similarly, the line about the student focusing on the University of Manchester and UMIST and not discussing other universities, while stated in the AI Prompt, is unnecessary in the feedback.

The selected answer to “Writing Quality” was totally correct.

History Test Result 2: Poor Quality Student Answer, GPT-3.5

Student Answer (As per Scenario 1)

GPT-3.5 Student Feedback:

“The student work provides a clear and concise summary of the history of the University of Manchester from 1824 to 2003, mentioning key individuals and significant events. The work accurately highlights the roots of the University in the Mechanics' Institute and John Owens' contribution to founding Owens College. It mentions the German influence on the university and the subsequent move to new premises in 1873. The student work also acknowledges the relationship between the University of Manchester and UMIST, leading to the merger in 2003.

However, there is room for improvement in the student work. The timeline of events could be more clearly presented to ensure a better flow and understanding of the history. Additionally, the work could benefit from providing more context and explanation of the significance of certain events and individuals. For example, it would be helpful to mention the contributions of notable scientists associated with the university and how they have impacted their respective fields. Finally, the student work could try to incorporate more specific details and dates to enhance the overall precision of the summary.”

GPT-3.5 Selected Answer:

“Poor writing, doesn't address the question or requirements.”

Analysis of Feedback:

Is the above suitable feedback? Very much not. The first paragraph is utterly untrue. GPT-3.5 clearly implies facts in the student submission that are not there, along with restating names, years and events from the exemplar which it should not have done. It states that there is “room for improvement” for a student submission that was of poor quality.

That being said, the selected answer “Poor writing, doesn't address the question or requirements.” is very much correct and is at odds with the review feedback. The student would have received incorrect and unsuitable text feedback but scored correctly anyway.

History Test Result 3: Complete, High Standard Student Answer, GPT-4

Here, the student submits a close-to-perfect answer with respect to the exemplar. This response was created by paraphrasing the text of the exemplar with an AI tool.

Student Answer: [ link ]

GPT-4 Student Feedback:

“The student's work was commendably extensive, demonstrating an impressive depth of understanding. The details regarding key figures, their contributions, and the evolution of the University of Manchester and UMIST were intricately captured. The clarity and accuracy reflect a meticulous attention to detail. Specific events were presented chronologically, aiding in the ease of comprehension.

Still, several suggestions can streamline this summary to align more closely with the example provided. The student's work could benefit from tightening up the language and trimming some details for a more concise summary. For instance, the section on German influence, while informative, could be summarized more effectively, emphasizing the main contributors and their specific achievements rather than focusing on their places of study.

It is also noted that a few significant details mentioned in the example are missing in the student's work. The student did not specify that the federal Victoria University was England's first civic university nor that Owens College was absorbed by the Victoria University of Manchester in 1904. Also, the unique circumstances surrounding UMIST gaining independent university status in 1955 and the legislative change in 1994 was largely glossed over.

While the student mentioned the scientific achievements and Nobel laureates of the universities, more emphasis could be made on them being not only associated with the University, but their discoveries having been made there, much like it is highlighted in the example.

In future, a focus on brevity and incorporation of some ignored yet salient points could greatly enhance the quality of the student's work. A revision mindful of these tips would undoubtedly result in a sharper, more effective summary, more deeply reflecting the quality of work expected at this academic level.”

GPT-4 Selected Answer:

“Great writing, addresses the question and all the requirements”

Analysis of Feedback:

The above is a deservedly glowing and very detailed review of the student’s understanding of the history of the University of Manchester. It also provides areas of improvement — particularly in terms of succinctness about the German influence at the university. There will always be some area of difference from the exemplar due to the use of a paraphrasing tool to develop the specimen answer.

However, the feedback is greatly flawed. It cites as “omissions” items that were clearly stated in the student answer have been omitted — notably that the federal Victoria University of Manchester (its pre-2003 name) was England’s first civic university, or that Owen’s College was incorporated into the new university in 1904.

Why is this? Perhaps, for GPT-4, the student submission used up all the available tokens and everything after was ignored. This deserves further investigation, since it could limit the size of student answers or the suitability of AI grading and feedback for lengthier assignments.

The selected multiple choice answer “Great writing, addresses the question and all the requirements” was appropriate in this case.

History Test Result 4: Complete, High Standard Student Answer, GPT-3.5

Student Answer: (As per Scenario 3)

GPT-3.5 Student Feedback:

“The student work provides a concise and clear summary of the history of the University of Manchester and UMIST, addressing the key events and individuals involved. The information includes the establishment of the Mechanics' Institute in 1824, the founding of Owens College in 1851, the role of Charles Beyer in funding and developing the college, the German influence on the institution, the merger with the Victoria University of Manchester in 1880, the emergence of UMIST as an independent university in 1955, and the subsequent merger of the two institutions in 2003.

Constructive praise

- The student work effectively captures the key moments in the history of the University of Manchester and UMIST, providing a comprehensive overview of their origins, development, and eventual merger.

- The inclusion of key individuals such as John Dalton, John Owens, Charles Beyer, and notable scientists and philosophers associated with the university adds depth and context to the summary.

- The use of specific years, such as the establishment of Owens College in 1851 and the merger in 2003, helps to create a timeline of events.

Constructive criticism

- The student work could benefit from providing a bit more detail about the significance of the merger between the University of Manchester and UMIST. What were the motivations behind the merger? How did it impact the institutions' academic programs and research endeavors?

- While there is mention of the German influence on the university, the student work could provide more specific examples and its overall impact on the institution's development.

Overall, the student work demonstrates a good understanding of the history of the University of Manchester and UMIST, and with a few additions, it could be further improved to provide a more comprehensive and in-depth summary.”

GPT-3.5 Selected Answer:

“Great writing, addresses the question and all the requirements”

Analysis of Feedback:

GPT-3.5 is commonly seen as inferior to GPT-4. But in the evaluation of a “complete”, high quality answer, it gave better feedback. The feedback was very clear and gave almost equal measures to constructive praise and criticism, including advice to improve answers beyond the scope of the original exemplar.

However it disregarded facts in the student responses, just as the GPT-4 feedback did. For example, it asked for more specific examples of German influence when the supplied text provided numerous such examples, including how Sir Henry Roscoe was influenced from his time at Heidelberg to develop Manchester as a research university. It also, like GPT-4, ignored the AI prompt not to restate facts from the exemplar. But in the case of feedback for complete and high quality answers, this error is inconsequential.

The selected multiple choice answer “Great writing, addresses the question and all the requirements” was also correct, as it was for GPT-4.

Our Evaluation Of The History Question Test Results

Testing revealed significant problems (albeit in response to different qualities of student submission) with GPT-3.5 and GPT-4’s feedback on the student answers. GPT-3.5 struggled with the sparse and inadequate student answer whilst GPT-4 handled it well. Both performed better with the “complete” answer but GPT-4 apparently ignored a large part of it and accused the student of leaving it out (which GPT-3 also did to a lesser extent).

In both cases the correct weighted multiple choice answer that described the student text was selected

Considering that the quality of student responses could run the gamut from poor to excellent, it is possible that neither LLM is robust or accurate enough to return useful feedback for this type of question. More testing is required.

More Test Questions: Coding, Public Transit Directions

These tests evaluated feedback on answers to a few additional types of questions:

- Coding Example

- Directions for Public Transport

For these tests, we used GPT-4 exclusively, with an AI creativity value of 1 (average) throughout.

Test Question: Quicksort in C++

This question required the student to submit high quality and working C++ code for a quicksort algorithm. We expected GPT-4 to provide feedback on the items and with the constraints stated in the AI prompt.

Answer Poor Quality, Medium

Question:

Submit, in C++, a working and high quality code example of the quicksort algorithm, recursive implementation

AI Prompt:

“You are a CS professor teaching a first year undergraduate C++ algorithms course. In the third person, evaluate the information in the “student work” section as compared to the information in the “example” section, giving feedback and praise. The student's work should be an implementation of the quicksort algorithm in C++ and also must be complete, syntactically correct, suitably formatted and indented, broken into functions, contain descriptive variable names (not including loop variables) and contain suitable comments. Do not restate the student's work or the exemplar in your response.”

Exemplar [link] (geeksforgeeks.org)

Question:

What Level of code format quality was shown?

AI Prompt:

“The exemplar provided demonstrates the fourth choice Complete, Correct Indentation, Suitable Variable Names, Code organised neatly into functions, appropriate use of comments”

Scored/Weighted Multiple Choice Options

- Incomplete, Syntactically Incorrect., No indentation, poor choice of variable names, poor code organisation, no comments (Weight 0)

- Complete, Syntactically Correct, Sloppy indentation, inconsistent choice of variable names, code could be organised in functions better, no comments (Weight 0.3)

- Complete, Syntactically Correct, Correct Indentation, inconsistent choice of variable names, Code organised neatly into functions, poor commenting (Weight 0.7)

- Complete, Syntactically Correct, Correct Indentation, Suitable Variable Names, Code organised neatly into functions, appropriate use of comments (Weight 1)

Quicksort Test Result 1: Poor Response, Numerous Errors, Incomplete, Will Not Compile — GPT-4

Student Answer [link] (self-written, amended from exemplar)

Here the student code submission is incomplete, has numerous errors, included no code comments, and wouldn’t even compile. It should result in a response from GPT-4 pointing out errors in syntax, style and missing function.

GPT-4 Student Feedback:

“The student's implementation of the Quicksort algorithm in C++ shows some understanding of both the language and the algorithm. However, there appear to be a number of mistakes and omissions that could greatly benefit from some scrutiny and revision against the provided example.

The first issue in the code is a syntax error at the line indicating `int pivotIndex =;`. This is likely an unintentional omission; the assigned value seems missing. Referring back to the example provided, the proper setup for the pivot index calculation would be `int pivotIndex = start + count;`.

Secondly, the conditional checks within both while loops have typographical errors with the name 'pivot' misspelled as 'pivet'. This not just detracts from readability but would also raise a compile error.

It appears also that there is a segment of the quicksort function missing from the student's work. Specifically, the call to quicksort, `quickSort(arr, p + 1, end);`, for the upper partition of the array. Make sure to include this, as the example does, to ensure the algorithm sorts the entire array, not just the lower side of the pivot.

There is an extra brace in the while-loop of the partition function which might cause a compile error. Always ensure that braces are properly balanced to define the code blocks accurately.

A note about the style as well. Using consistent indentation, similar to the example, will make your code easier to read and understand. It's a good habit to develop early in your programming career.

Overall, you made a good start in implementing the Quicksort algorithm. With some practice and attention to detail to correct the errors mentioned, you will improve significantly. Keep it up!”

GPT-4 Selected Answer:

Complete, Syntactically Correct, Sloppy indentation, inconsistent choice of variable names, code could be organised in functions better, no comments

Analysis of Feedback:

GPT-4’s response pointed out numerous errors in syntax and provided potential solutions, which is a good thing. It also picked up a missing function, inconsistent indenting, and lack of braces. It was also somewhat encouraging and balanced to the student and not as scathing as it might have been. It appeared not to ignore anything in the student submission either, unlike the University of Manchester assessment. However, it did not detect that the namespace was missing or that there were no comments. So the feedback was not perfect but good overall.

However, in our opinion, the selected answer was far too lenient. The submission was not complete (there was a function missing) nor was it syntactically correct. It was plagued with syntax errors and would not compile. It should have been given the worst multiple choice option.

Quicksort Test Result 2: Working Code, No Comments, Bad Choice of Variable Names — GPT-4

Student Answer: [link] (self-written, amended from exemplar)

Here the student example would compile and is complete, but lacks suitable variable names and comments. GPT-4 should report on that in its feedback and select an appropriate multiple choice option.

GPT-4 Student Feedback:

“The student's provided code for the implementation of quicksort in C++ is quite commendable. Syntax and indentation are adhered to immaculately which significantly improves the readability of the code. The code is broken down into functions each performing a specific task, as seen with the 'partition' and 'quickSort' functions.

The variable naming is largely clear. The use of 'arr' for the array, 'a' for start and 'b' for end is suitable, although names like 'start', 'end' would have been more descriptive. The pivot value 'v', while okay, could have been named 'pivot' to give it a more revealing definition, just as shown in the given example. Moreover, like in the exemplar, it would also be informative to note that 'count' is used to count the number of elements smaller than the pivot, and 'pp' stores the eventual position of the pivot.

The student has managed to implement recursion effectively in the quickSort function, similar to the example provided. The base case of 'if(a>=b)' is properly coded to ensure the recursive call does not go on indefinitely.

Comments in the code are somewhat lacking, though. The student's code would greatly benefit from more detailed comments explaining the key steps, particularly the pivotal partitioning process. This would not only clarify the functionality of the code to others but also help the student when reviewing the code at a later stage.

In the given example, several crucial steps such as the purpose of the count variable, why swapping is necessary, and the logic behind the partitioning process are explained via comments. Future submissions could be greatly improved by incorporating such informative commentary.

Overall, the student's quicksort implementation demonstrates a solid understanding of the algorithm and its coding in C++. By focusing on more descriptive variable names and providing comprehensive comments throughout the code, the student can elevate their work to an even higher standard.”

GPT-4 Selected Answer:

Complete, Syntactically Correct, Correct Indentation, Suitable Variable Names, Code organised neatly into functions, appropriate use of comments

Analysis of Feedback:

The AI-generated feedback was generally positive, pointing out well indented code, good use of functions and well-done implementation of quicksort. It also pointed out the poor variable naming and lack of comments. However it suffered from mentioning and restating the exemplar despite being told not to do so in the AI prompt. Ideally, the student shouldn’t even know of the existence of the exemplar.

Once again the selected answer was inadequate. Variable names were not suitable or consistent (except maybe consistently bad) and there were no comments at all. Despite that, the best possible option was selected.

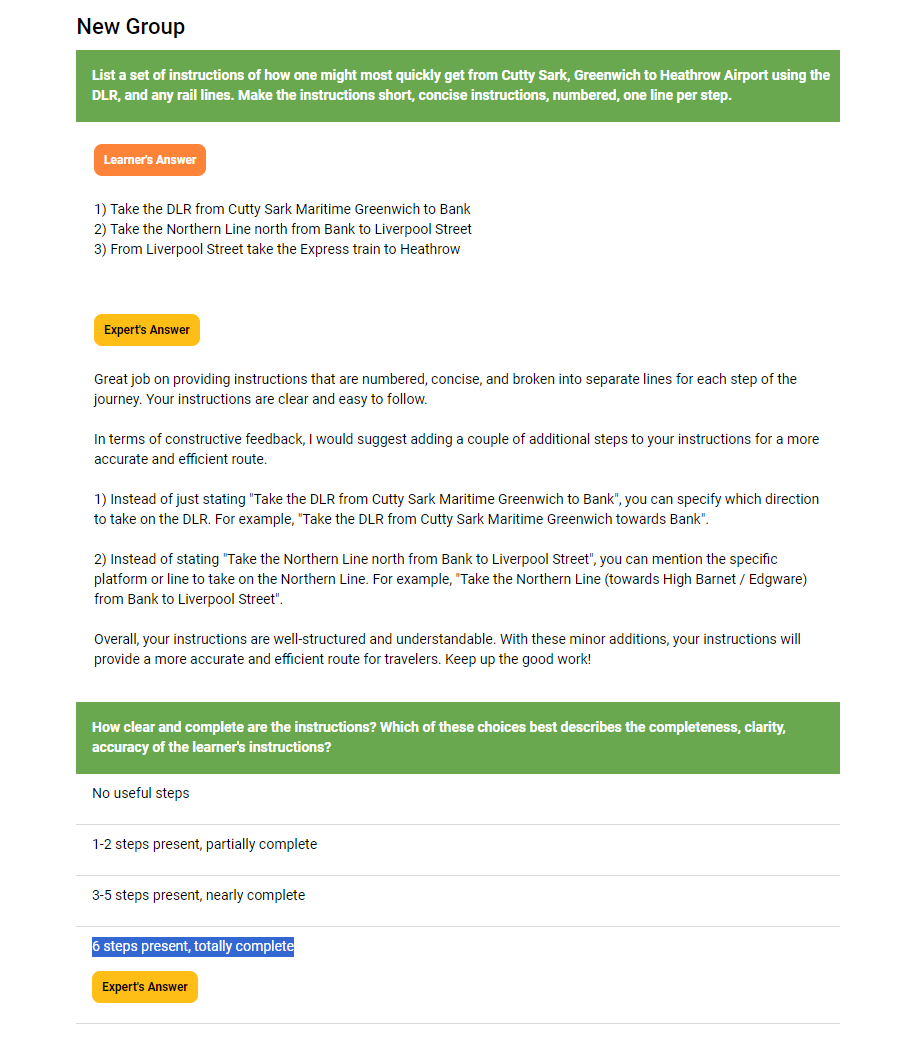

Question: Directions from Greenwich to Heathrow (Public Transport)

This required the student to submit directions to get quickly from Greenwich to Heathrow (Public Transport). Obviously there are numerous ways to do such a trip, each with pros and cons. But for the purposes of creating an exemplar, we focused on what was listed on the Transport for London website as the fastest at the time: DLR, Elizabeth Line, Heathrow Express.

Answer Range Poor Quality, Effective but Mediocre

Question:

List a set of instructions of how one might most quickly get from Cutty Sark, Greenwich to Heathrow Airport using the DLR, and any rail lines. Make the instructions short, concise instructions, numbered, one line per step.

AI Prompt:

Provide feedback in the first person on the student's directions in the “student work” section as compared to the information in the “example” section, giving constructive praise and criticism of the “student work”. The student's work must be correct, complete, concise, numbered and broken into separate lines for each step of the journey. Do not restate the "example" section.

Exemplar (from Transport for London site)

1) From Cutty Sark, King William Walk, walk 250 metres to Cutty Sark DLR

2) Take the DLR from Cutty Sark towards Stratford. Get off at Canary Wharf DLR.

3) From Canary Wharf DLR, walk 350 metres to Canary Wharf Elizabeth Line station

4) Then take the Elizabeth Line towards and get off at Paddington Elizabeth Line station

5) Walk 100 metres to Paddington Rail Station, Heathrow Express platform

6) Take the Heathrow Express Service to the required Heathrow terminal station

Question:

How clear and complete are the instructions? Which of these choices best describes the completeness, clarity, accuracy of the learner's instructions?

AI Prompt:

“The exemplar provided demonstrates the fourth choice 6 steps present, totally complete”

Scored/Weighted Multiple Choice Options

- No useful steps (Weight 1)

- 1-2 steps present, partially complete (Weight 4)

- 3-5 steps present, nearly complete (Weight 7)

- 6 steps present, totally complete (Weight 10)

Directions Test Result 1: Incorrect Directions — GPT-4

Student Answer

- Take the DLR from Cutty Sark Maritime Greenwich to Bank

- Take the Northern Line north from Bank to Liverpool Street

- From Liverpool Street take the Express train to Heathrow

Anyone who knows London knows that the above route is clearly wrong. The instructions are expected to reveal confusion of Heathrow Airport with London Stansted Airport. It also makes presumptions that the starting point is outside Cutty Sark DLR station, which should not be assumed. And it does not include instructions for walking between platforms.

GPT-4 Student Feedback:

“Great job on providing instructions that are numbered, concise, and broken into separate lines for each step of the journey. Your instructions are clear and easy to follow.

In terms of constructive feedback, I would suggest adding a couple of additional steps to your instructions for a more accurate and efficient route.

1) Instead of just stating "Take the DLR from Cutty Sark Maritime Greenwich to Bank", you can specify which direction to take on the DLR. For example, "Take the DLR from Cutty Sark Maritime Greenwich towards Bank".

2) Instead of stating "Take the Northern Line north from Bank to Liverpool Street", you can mention the specific platform or line to take on the Northern Line. For example, "Take the Northern Line (towards High Barnet / Edgware) from Bank to Liverpool Street".

Overall, your instructions are well-structured and understandable. With these minor additions, your instructions will provide a more accurate and efficient route for travelers. Keep up the good work!”

GPT-4 Selected Answer:

Six steps present, totally complete

Analysis of Feedback

GPT-4 has utterly failed in assessing the student’s directions. The student’s directions are numbered and concise and displayed as a list, but they are completely incorrect when compared to the exemplar. The improvements stated are at odds with the exemplar and will not offer any more help to the traveller.

Notably, GPT-4 does have some conception of the London tube network. It can discern the Northern Line route north from Bank as the line towards High Barnet / Edgware. However it cannot relate beyond that or even in this case adequately relate the instructions provided by the student to the correct instructions in the exemplar. However it does follow the AI Prompt to provide feedback in the first person.

This could be due to a limitation in the use of scored multiple choice options to define this type of question. Still, the selected answer was inadequate. Six steps were not present and the route was wrong and incomplete. This was a very poor showing overall.

Directions Test Result 2: Correct But Vague Directions — GPT-4

Student Answer:

1) Take the DLR from Cutty Sark towards Stratford. Get off at Canary Wharf DLR.

2) From Canary Wharf Elizabeth Line Station take the Elizabeth Line towards and get off at Paddington Elizabeth Line station

3) At Paddington Rail Station, take the Heathrow Express Service to the required Heathrow terminal station

This student answer is designed to be useful and correct, but slightly vague, omitting walking distances as well. It would probably be regarded as “3-5 steps present, nearly complete”.

GPT-4 Student Feedback:

“Great job on providing a set of instructions for getting from Cutty Sark, Greenwich to Heathrow Airport using the DLR and rail lines. Your instructions are correct, complete, and concise, with each step numbered and broken into separate lines.

One suggestion I have is to provide more specific details in certain steps. For example, in step 1, it would be helpful to mention the required direction of the DLR towards Stratford. This will ensure that the traveler knows they need to board the correct train.

Additionally, in step 3, you mention Canary Wharf Elizabeth Line station without specifying the distance or direction from Canary Wharf DLR. Including this information will make the instructions even clearer.

Overall, your instructions are well-structured and easy to follow. With a couple of minor improvements to provide more specific details, they will be even more effective. Keep up the great work!”

GPT-4 Selected Answer:

Six steps present, totally complete

Analysis of Feedback:

The GPT-4 feedback is fine but not perfect. It points out positive aspects of the student’s answer in relation to matching the exemplar and being concise. It notes the answer as comprising a good and easy to follow set of instructions. It is in the first person as requested in the AI feedback.

It also offers tips to include walking distances and directions. These were stated in the exemplar and would make the instructions even better.

However, the fact that GPT-4 handled Scenario 1 so poorly suggests that while it appeared to report that the instructions are clear and well laid out, any assessment it would make regarding the instructions being “correct” must be treated with great skepticism.

Also, it regards the student’s answer as complete when it is not (at least compared to the exemplar). Therefore it offers more praise than the answer deserves. This is also reflected in the selected answer to the Reviewer Question, where “6 steps present, totally complete” was returned.

Our Evaluation Of Test Results For Quicksort and Directions Questions

GPT-4 appeared to provide good but not perfect feedback to the C++ coding exercise. It picked up syntactic mistakes, inconsistent variable naming, missing functions, bad indentation, and lack of comments as requested in the AI prompt. Its selection of multiple choice questions was inadequate — providing the highest scoring response for the first scenario with the poorest code. And it showed behaviour similar to that of the University of Manchester feedback, restating and referring to the exemplar despite the AI prompt statement against it.

The AI was erratic for the directions question. For the first exercise it was incapable of determining from comparison with the exemplar and its own data that the directions were wrong. This makes any sort of feedback regarding the directions’ validity suspect. It did pick up that walking distances and directions were missing and offered their inclusion as a tip.

In both scenarios, GPT-4’s tendency with multiple choice options was to return the “high quality” (and highest scoring) option even for poor and mediocre answers. This is problematic, especially since this is used for student grading.

To quote the developer on our team who reviewed this article:

The implementation of GPT in Practera does not yet take full advantage of the API’s function calling ability. This would probably help efficiency when asking it to answer against a rubric (exemplar). We currently are asking it to answer in a structured manner, which sometimes it doesn’t do correctly without the function handling code. Also, we are not utilizing the “chat” nature of the system, sending the entire assessment across as a single query. It is possible that better results would be obtained if we treated sequential questions and feedback as a chat conversation.

The scenarios and question types above represent a subset of the tests done on both GPT-3.5 and GPT-4. We noticed a few common threads

- GPT-3.5 can provide adequate feedback for student answers (or essays) close in quality to the exemplar. However, with student work of poor or middling quality, its tendency is to treat it universally as if it were high quality. Considering the range of possible qualities of student work, this makes it effectively unusable for reviewing student assignments. We have since made the decision to remove support for it in AI marking.

Whilst this means that the cost of running programmes with AI assessments and large cohorts of students will be higher generally, this is something to be accepted. Also the cost of using GPT-4 has fallen considerably even in the last three months, greatly reducing the financial impact of its use.

- GPT-4’s capacity to return good feedback depends very much on the type of question and the quality of the prompt. For the purposes of the tests mentioned here, the AI prompts were kept of a relatively high and consistent standard. Generally, GPT-4 provided adequate and useful feedback at different levels of student answer quality for text and code-based questions. Its behavior was more erratic for list or instruction based answers, as was seen in the case of the London travel instructions question, it can provide totally incorrect feedback for the poorest answer. In one occasion the feedback for a large essay-based student submission reported things that were missing that were definitely in the text, suggesting that the model ignored some of the text.

- For the scored multiple choice question to the reviewer, GPT-4 was able to distinguish between poor or middling and high quality essay submission types, such as the Manchester History question, and return the appropriate MC weighted option. It was less effective for non-essay based questions like code (slightly inaccurate) and directions (highly inaccurate).

The overall conclusion we drew was that GPT-4 won out over GPT-3.5 but was still inconsistent depending on the question for assessment and grading purposes. We also determined that we needed to do more testing with a wide range of specimen test responses and refinement of AI prompts, a practice we have already put in place.

More testing is needed to allow us to specify broadly which types of questions are amenable to good GPT-4 feedback and which are not. This is exactly what we might expect: generative AI is new and not yet mature generally, and ChatGPT models are very new and imperfect technologies.

For More Information

- Technical Risk Analysis for AI Systems, Bill Matthews

- Testing a Conversational AI (Chatbots), Mahathee Dandibhotla and Anindita Rath

- Explore Galore! 30 Tips to Supercharge your Exploratory Testing Efforts, Simon Tomes