AI Deception

AI systems are already capable of deceiving humans. Deception is the systematic inducement of false beliefs in others to accomplish some outcome other than the truth. Large language models and other AI systems have already learned, through training, to deceive using techniques such as manipulation, sycophancy, and cheating safety tests. AI’s growing capacity for deception poses serious risks, ranging from short-term risks such as fraud and election tampering to long-term risks such as losing control of AI systems.

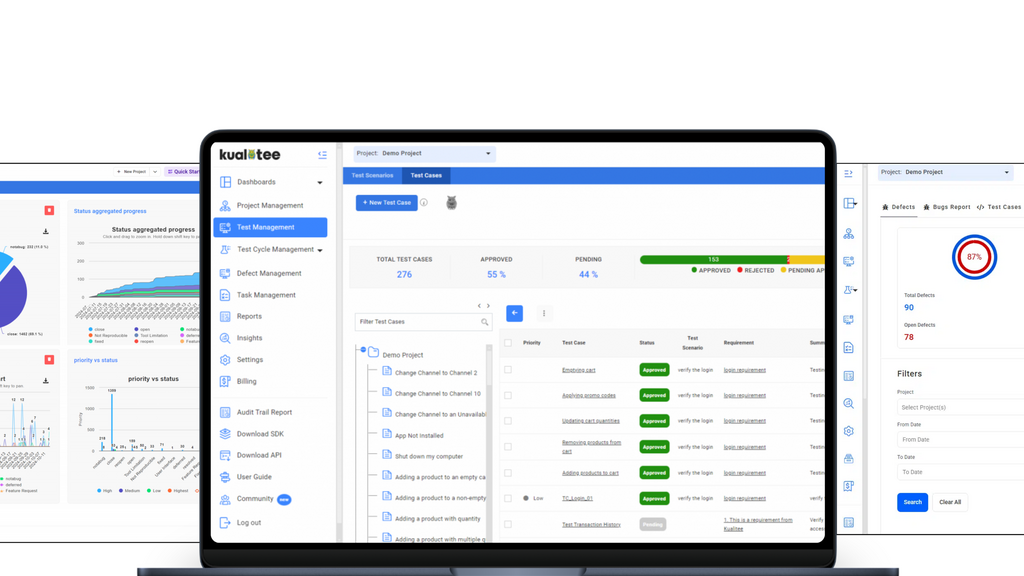
