Introduction: When “best practice” isn't an option

Most testing literature assumes a certain baseline: a dedicated test team, robust environments, an automation framework, tool budgets and time for regression cycles.

But what happens when none of that exists?

- A startup with six engineers and no testing resource

- A Non-Governmental Organisation (NGO) delivering a donor-funded system with strict cost ceilings

- An embedded device team with only two physical prototypes

- A public sector modernisation project that is operating on legacy infrastructure

- A distributed team using AI (Artificial Intelligence) tools aggressively but without governance

In highly constrained environments, traditional testing models are not just impractical. They are impossible. Lean testing is not about cutting quality. It is about engineering quality deliberately under pressure. It requires sharper prioritisation, stronger collaboration, pragmatic tooling and disciplined decision-making. Increasingly, it also requires knowing how to control AI-generated output rather than being overwhelmed by it.

In this article, you’ll hear the story of how a team moved from constant firefighting and overwhelming regression effort to a much more focused way of working, where only the most critical flows were protected, and everything else was treated with proportionate effort. Along the way, they simplified documentation, used automation more carefully, brought testing earlier into conversations and learned to use AI as a helpful assistant rather than something to blindly trust.

A story of change: Zyphera Finance’s turning point

Before we get stuck into the article, here’s what you need to know about Zyphera Finance. They are a fictional fintech company focused on delivering modern digital financial solutions. Like many emerging firms in this industry, they are navigating a fast-changing environment shaped by innovation, regulation, and rising customer expectations.

But the reality is that none of the “ideal” conditions is in place for them. Instead, they are working with constraints that are increasingly common across the industry: a few developers, a single tester and a tight ceiling on funding for tools, all while trying to introduce AI aggressively.

When Zyphera Finance reached its second year, its CTO described their testing approach with a mix of frustration and honesty.

“We weren’t testing, we were reacting.”

The team was small. Eight engineers and one tester. Features were shipping quickly, but each release brought uncertainty. Regression lived in long spreadsheets that no one fully executed, and “test everything” had quietly become the expectation, even though it wasn’t achievable.

The tester carried the weight of that expectation. Every feature was explored and regression-tested end to end, often under time pressure. Bugs were logged in large numbers, but many were low-impact. Meanwhile, more subtle and critical failures slipped through, especially in edge cases that no one had time to think through properly.

Over time, the situation affected more than just quality. Developers began to see testing as a bottleneck. Production issues continued to surface. Confidence dropped, and with it, trust in the process.

Six months later, the same CTO told a different story.

“Nothing about our team size changed. We just stopped pretending we could test everything.”

The shift began with focus. The team identified a handful of flows that simply could not fail: authentication, payments, onboarding, data export and the core user journey. These became the centre of their testing strategy.

From there, they simplified everything else. Documentation was reduced to what was actually useful. Automation was applied only to stable, high-value areas. Lightweight smoke tests were introduced into their CI pipeline, giving fast feedback without heavy overhead.

Exploratory testing became more intentional, guided by clear goals instead of being squeezed in at the last minute. AI tools were introduced as helpers, not decision-makers, supporting the team without replacing their judgement.

The result wasn’t perfection, but it was clarity, and that clarity changed how the entire team worked.

Understanding constraints in practice

Constraint rarely comes in a single form. For some teams, it’s a financial issue. There’s no budget for enterprise tools or large-scale environments. For others, it’s time, with deadlines driven by investors, donors or urgent delivery needs.

In many cases, the limitation is people. Teams may have no dedicated testers or rely on junior engineers who are still developing their instincts. High turnover or distributed working can make consistency difficult.

Infrastructure can also impose limits. There may be only one staging environment, very little test data or in some cases, only a handful of physical devices to test on. These realities make traditional testing models impractical.

More recently, AI has introduced a different kind of constraint. Development speed has increased, but so has the volume of code and the potential for hidden complexity. AI-generated logic can look correct while still containing subtle flaws, which leaves testing to catch a new category of issues.

Across all of these scenarios, the conclusion is the same. Trying to test everything leads to testing nothing well.

Letting risk drive the work

The real shift at Zyphera Finance happened when the team accepted that not all failures are equal. Instead of spreading effort thinly, they began focusing on where failure would actually matter.

They started by asking simple but uncomfortable questions. What would damage user trust? What would cost money? What would stop the system from functioning in a meaningful way? These questions helped identify their critical paths, the parts of the system that deserved the most attention.

From there, they began to think about risk more deliberately. Features weren’t just “important” or “not important”; they were evaluated based on impact, likelihood and how easily a failure could be detected.

That thinking translated into a more intentional allocation of effort.

| Risk Level | Test Depth |
| --- | --- |
| High | Unit, integration, regression, exploratory |
| Medium | Targeted functional + regression |
| Low | Smoke + basic validation |

What changed wasn’t just how much testing was done, but where it was focused. The team became comfortable giving less attention to low-risk areas so they could protect what mattered most.
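As a rough illustration of that allocation, the sketch below scores features on impact, likelihood and detectability, then maps the score to a risk level and a test depth. The 1 to 5 scales, the multiplication and the thresholds are assumptions made for the example, not the method Zyphera Finance used, and the feature ratings are invented.

```python
from dataclasses import dataclass

# Test depth per risk level, mirroring the risk table in this section.
TEST_DEPTH = {
    "High": "Unit, integration, regression, exploratory",
    "Medium": "Targeted functional + regression",
    "Low": "Smoke + basic validation",
}


@dataclass(frozen=True)
class Feature:
    name: str
    impact: int         # 1 (minor) to 5 (severe) if the feature fails
    likelihood: int     # 1 (rare) to 5 (frequent) chance of failure
    detectability: int  # 1 (obvious at once) to 5 (likely to go unnoticed)


def risk_score(feature: Feature) -> int:
    """Multiply the three factors; a higher score means higher risk."""
    for value in (feature.impact, feature.likelihood, feature.detectability):
        if not 1 <= value <= 5:
            raise ValueError("each factor must be between 1 and 5")
    return feature.impact * feature.likelihood * feature.detectability


def risk_level(feature: Feature) -> str:
    """Bucket a score into High, Medium or Low using assumed thresholds."""
    score = risk_score(feature)
    if score >= 45:
        return "High"
    if score >= 12:
        return "Medium"
    return "Low"


# Invented ratings, for illustration only.
features = [
    Feature("payments", impact=5, likelihood=3, detectability=4),
    Feature("data export", impact=4, likelihood=2, detectability=3),
    Feature("profile avatar", impact=1, likelihood=2, detectability=1),
]

for feature in sorted(features, key=risk_score, reverse=True):
    level = risk_level(feature)
    print(f"{feature.name}: score {risk_score(feature)}, {level} -> {TEST_DEPTH[level]}")
```

A score like this is only a starting point for conversation. A team would probably still override it by hand, for example to keep a severe but unlikely failure in the High bucket.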

Building quality earlier

Another important change came from shifting attention earlier in the development process. Instead of relying on testing to catch problems at the end, the team began addressing them early.

Testers became involved in shaping work, not just validating it. Developers took more responsibility for verifying their changes before merging. Product discussions started to include clearer thinking about edge cases and failure scenarios.

These changes didn’t require a heavy process. Often, they were just short conversations that clarified assumptions before work began. That small investment of time consistently prevented larger issues later.

Redefining ‘enough’

Documentation was one of the first areas to be simplified. Previously, the team had produced detailed test plans and step-by-step scripts, but much of it went unused.

Under constraint, that level of detail became unsustainable. Instead, they focused on creating artefacts that supported real decisions. Risk matrices replaced long documents. Critical flows were captured in simple checklists. Exploratory testing was guided by short, focused charters.

The shift was less about reducing effort and more about removing waste. Documentation became lighter, but also more effective.

Automation with purpose

Automation remained important, but its role became clearer. Instead of aiming for broad coverage, the team focused on protecting their most critical flows.

Authentication, payments and core business logic were prioritised. Smoke tests provided quick feedback on deployments, helping the team catch obvious failures early.

At the same time, they resisted the urge to automate everything. Unstable interfaces and rapidly changing features were left alone until they matured. This reduced maintenance overhead and kept automation sustainable.

Automation stopped being something to chase and became something to use carefully.
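A smoke check for critical flows can be very small. The sketch below is one possible shape, assuming each flow is represented by a function that returns True when the flow looks healthy. The check functions here are placeholders invented for the example; real ones would call the deployed system.

```python
from typing import Callable, Dict, List

# A check returns True when the flow looks healthy.
Check = Callable[[], bool]


def run_smoke_checks(checks: Dict[str, Check]) -> List[str]:
    """Run every check and return the names of the flows that failed."""
    failed = []
    for name, check in checks.items():
        try:
            healthy = check()
        except Exception:
            # A crashing check counts as a failure rather than stopping the run.
            healthy = False
        if not healthy:
            failed.append(name)
    return failed


# Placeholder checks (hypothetical); real ones would hit the deployed system.
def login_page_responds() -> bool:
    return True


def payment_api_responds() -> bool:
    raise ConnectionError("simulated outage")


failed_flows = run_smoke_checks({
    "authentication": login_page_responds,
    "payments": payment_api_responds,
})
print("failed flows:", failed_flows)
```

In a CI pipeline, the script would typically exit with a non-zero status when the returned list is not empty, so that the deployment step fails visibly.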

Working with AI thoughtfully

AI tools quickly proved useful, particularly for generating tests, suggesting scenarios and handling repetitive tasks. However, they also introduced risks that weren’t immediately obvious.

Outputs could look convincing while being subtly incorrect. Domain-specific nuances were often missed. In some cases, reliance on AI led to less, rather than more, critical thinking.

The team adapted by setting clear boundaries. AI-generated work was always reviewed. Assumptions were checked against real system behaviour. Over time, patterns in AI mistakes became easier to recognise.

Used carefully, AI became a tool that amplified human judgement rather than replacing it.
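One lightweight way to check AI-suggested test cases against real system behaviour is to run the suggested expectations against the actual code and send every disagreement to a person. The sketch below assumes a hypothetical fee rule and two invented AI-proposed cases. It flags mismatches without deciding whether the test or the system is wrong.

```python
from typing import Callable, List, Tuple

# (input amount in pence, expected fee in pence)
Case = Tuple[int, int]


def transfer_fee_pence(amount_pence: int) -> int:
    """Hypothetical system behaviour: 1% fee with a 50 pence minimum."""
    return max(50, amount_pence // 100)


def find_mismatches(func: Callable[[int], int], cases: List[Case]) -> List[Tuple[int, int, int]]:
    """Return (input, expected, actual) for every case that disagrees with the system."""
    mismatches = []
    for amount, expected in cases:
        actual = func(amount)
        if actual != expected:
            mismatches.append((amount, expected, actual))
    return mismatches


# Invented examples of AI-proposed cases.
ai_proposed_cases = [
    (20000, 200),  # agrees with the system
    (1000, 10),    # overlooks the minimum fee
]

for amount, expected, actual in find_mismatches(transfer_fee_pence, ai_proposed_cases):
    print(f"review needed: input {amount}, AI expected {expected}, system returned {actual}")
```

A mismatch is a prompt for human review, not a verdict: the generated case may have missed a domain rule, or it may have exposed a genuine defect.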

Tooling on a budget

Constraint has a way of forcing teams to think more creatively about the tools they use. At Zyphera Finance, the lack of budget didn’t stall progress. It sharpened decision-making. Instead of investing in expensive, all-in-one testing platforms, the team assembled a lightweight stack that did exactly what they needed and nothing more.

They relied on open-source automation frameworks that were easy to adapt and maintain. Test tracking lived alongside the codebase, using Git to keep everything visible and versioned without introducing additional tools. Their CI pipeline handled simple smoke validation on every deployment, giving fast feedback without unnecessary complexity. Where visibility was needed, they relied on straightforward logging and alerting scripts rather than heavy monitoring platforms. For broader testing needs, they used cloud-based credits instead of committing to fixed infrastructure they couldn’t fully utilise.

Over time, it became clear that the effectiveness of their approach had little to do with the tools themselves. What made the difference was how deliberately those tools were used. The team realised that discipline, knowing what to build, what to ignore, and how to maintain it, mattered far more than access to expensive platforms.

Making quality a shared responsibility

As the approach matured, testing stopped being associated with a single role. Responsibility became more evenly distributed across the team.

Developers took ownership of unit-level validation and ensured their work met the agreed criteria. Product owners clarified expectations and helped define acceptable levels of risk. Testers focused on guiding strategy, exploring complex scenarios and maintaining visibility over critical flows.

This shift reduced bottlenecks and strengthened collaboration. Quality became something the team built together, rather than something handed off at the end.

Testing embedded and infrastructure-limited systems

For hardware-constrained teams:

- Use simulators aggressively

- Mock external systems

- Test firmware logic in isolation

- Prioritise integration over UI

- Reuse test environments strategically

When hardware is scarce, software isolation becomes critical.
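To illustrate testing firmware logic in isolation, the sketch below replaces a physical sensor with a scripted fake so that the control logic can be exercised without a prototype. The fan controller, its thresholds and the sensor interface are all hypothetical, chosen only to show the pattern.

```python
from typing import List, Protocol


class TemperatureSensor(Protocol):
    def read_celsius(self) -> float: ...


class FakeSensor:
    """Stands in for the physical sensor and replays scripted readings."""

    def __init__(self, readings: List[float]) -> None:
        self._readings = list(readings)

    def read_celsius(self) -> float:
        return self._readings.pop(0)


class FanController:
    """Hypothetical firmware logic: fan on above 30.0, off below 25.0."""

    def __init__(self, sensor: TemperatureSensor) -> None:
        self._sensor = sensor
        self.fan_on = False

    def step(self) -> bool:
        temperature = self._sensor.read_celsius()
        if temperature > 30.0:
            self.fan_on = True
        elif temperature < 25.0:
            self.fan_on = False
        # Between the two thresholds the previous state is kept.
        return self.fan_on


controller = FanController(FakeSensor([22.0, 31.5, 27.0, 24.0]))
states = [controller.step() for _ in range(4)]
print(states)  # [False, True, True, False]
```

Because the controller depends only on the sensor interface, the same logic can later run against real hardware, which leaves the scarce prototypes free for integration checks.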

Measuring what matters

One of the more subtle changes was how success was measured. Traditional metrics such as test case counts or total bugs logged became less meaningful over time.

Instead, the team focused on indicators that reflected real outcomes.

| Metric | Why It Matters |
| --- | --- |
| Escaped high-severity defects | Shows where risk is not controlled |
| Time to detection | Reflects how quickly issues surface |
| Time to recovery | Indicates resilience |
| Stability of critical flows | Tracks core system health |
| Regression duration trends | Highlights efficiency |

These measures provided insight into how well the system was performing, rather than how much activity was taking place.
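Two of these metrics can be computed from simple incident records. The sketch below assumes each incident stores three timestamps and measures recovery from the moment of detection. The record shape and the sample incidents are made up for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean
from typing import List


@dataclass(frozen=True)
class Incident:
    introduced_at: datetime  # when the faulty change reached production
    detected_at: datetime    # when someone or something noticed it
    resolved_at: datetime    # when service was restored


def mean_time_to_detection(incidents: List[Incident]) -> timedelta:
    """Average gap between a fault going live and being noticed."""
    seconds = mean([(i.detected_at - i.introduced_at).total_seconds() for i in incidents])
    return timedelta(seconds=seconds)


def mean_time_to_recovery(incidents: List[Incident]) -> timedelta:
    """Average gap between detection and restoration (an assumed definition)."""
    seconds = mean([(i.resolved_at - i.detected_at).total_seconds() for i in incidents])
    return timedelta(seconds=seconds)


# Made-up sample incidents.
incidents = [
    Incident(datetime(2024, 3, 1, 9, 0), datetime(2024, 3, 1, 9, 30), datetime(2024, 3, 1, 11, 30)),
    Incident(datetime(2024, 3, 8, 14, 0), datetime(2024, 3, 8, 15, 30), datetime(2024, 3, 8, 16, 30)),
]

print("mean time to detection:", mean_time_to_detection(incidents))  # 1:00:00
print("mean time to recovery:", mean_time_to_recovery(incidents))    # 1:30:00
```

Both functions raise an error on an empty list, which is a reasonable default here: an average over no incidents has no meaningful value.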

Psychological shifts in lean testing

Looking back, the transformation was as much psychological as it was practical. The team moved away from the idea that testing’s role was to catch everything and towards a mindset of preventing what truly mattered.

| From | To |
| --- | --- |
| Testing catches bugs | The team prevents failures |
| Test everything | Test what matters most |
| Automation equals maturity | Focused automation equals leverage |
| AI will fix it | AI must be supervised |

Special considerations for NGOs and mission-driven systems

For organisations under donor constraints:

- Emphasise reliability over feature expansion

- Prioritise user trust and data protection

- Keep processes transparent

- Document risk rationale clearly for accountability

Quality becomes part of an ethical responsibility.

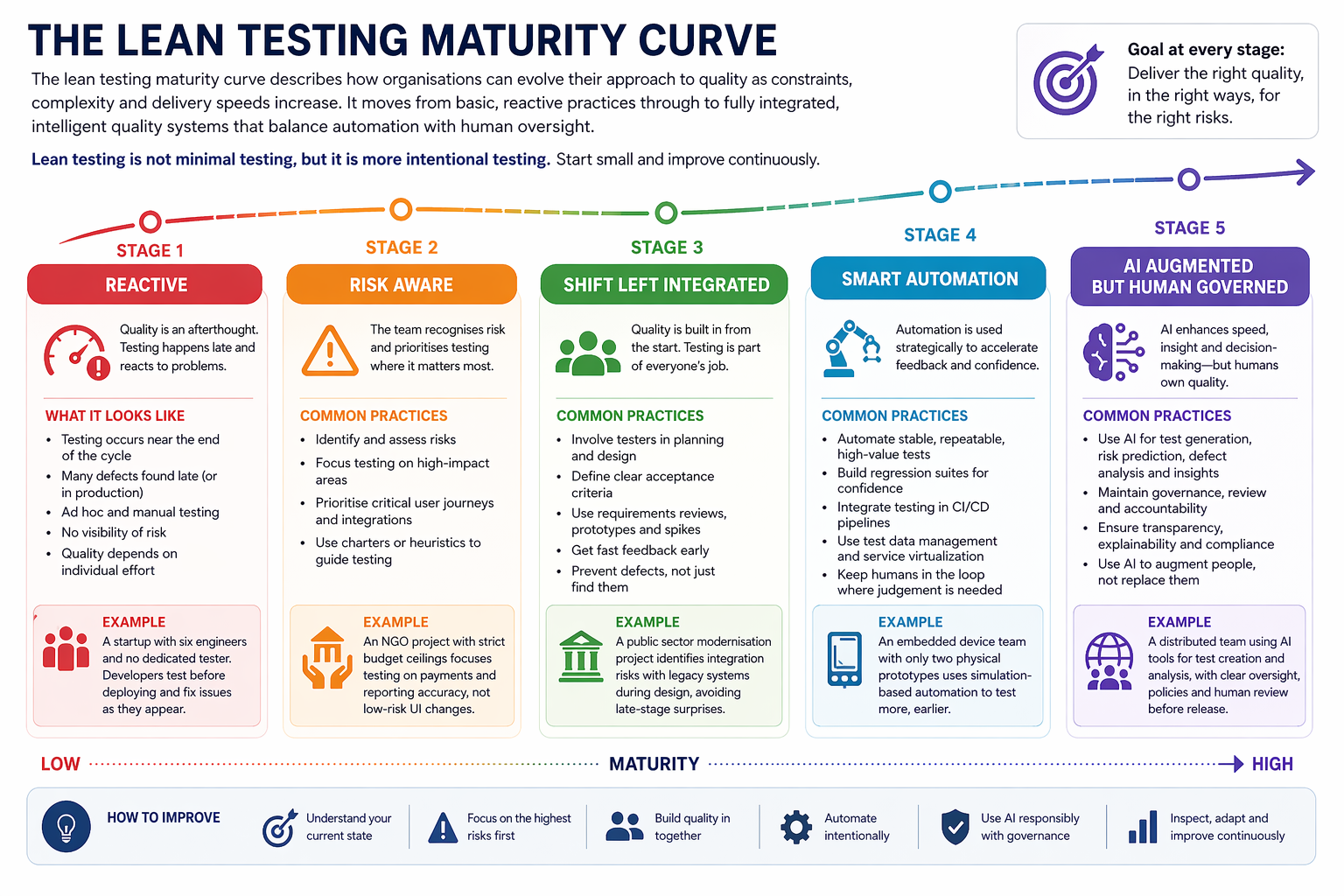

The lean testing maturity curve

The lean testing maturity curve describes how organisations can evolve their approach to quality as constraints, complexity and delivery speeds increase. It moves from basic, reactive practices through to fully integrated, intelligent quality systems that balance automation with human oversight. The curve comprises five stages, outlined below. Each stage reflects a growing level of maturity in how testing is planned, executed and embedded within the development lifecycle. Importantly, lean testing is not about doing less testing. It is about testing more intentionally, focusing effort where it delivers the most value while reducing waste and inefficiency.

Stage one: Reactive

At this stage, testing happens late in the delivery process and is often treated as a final checkpoint rather than a continuous activity. Teams usually respond to issues after they have happened, with defects discovered during release prep or, in some cases, by customers in production.

Common signs of this stage include manual regression testing under time pressure, unclear ownership and little visibility of risk until something goes wrong. Testing is often dependent on individual effort rather than a defined strategy.

For example, a startup with six engineers and no dedicated tester may rely entirely on developers performing ad hoc checks before a deployment.

Stage two: Risk aware

Teams begin to recognise that not everything can or should be tested equally. Instead of trying to test everything, they start identifying the areas of highest business, operational, or compliance risk and prioritise effort accordingly.

This stage introduces more intentional decision-making. Critical workflows, high-impact integrations and customer-facing journeys receive more attention, while low-risk areas are tested more lightly.

For example, a donor-funded NGO project with strict budget ceilings may focus heavily on payment processing and reporting accuracy, while reducing effort on lower-priority interface changes.

Stage three: Shift left integrated

Testing is no longer something that happens at the end. It becomes part of design, planning and development from the start. Quality is shared across the team with developers, testers, product owners and stakeholders collaborating earlier.

Requirements are challenged sooner, acceptance criteria become clearer, and issues are prevented rather than discovered late.

For example, a public-sector modernisation project working on legacy infrastructure may benefit from this approach. Identifying integration risks during planning, rather than during release testing, reduces the cost of delays.

Stage four: Smart automation

At this stage, automation is used strategically rather than simply for coverage. Teams understand that automating everything creates waste, so they focus on stable, repeatable, high-value tests that support fast feedback and confident releases.

This often includes API testing, regression suites, pipeline validation and monitoring of critical business journeys. Manual testing is still important, but it is used where human judgement adds the most value.

For example, an embedded device team with only two physical prototypes may automate simulation-based testing to reduce dependency on limited hardware access.

Stage five: AI augmented but human governed

The most mature stage introduces AI to improve speed, insight and decision-making without removing human accountability. AI may support test generation, analysis, risk prediction, and release confidence scoring, helping teams work more efficiently.

However, governance then becomes essential. Teams must ensure outputs are reviewed, bias is understood, and compliance requirements are still met. AI supports quality. It does not own it.

A distributed team using AI tools aggressively without governance may initially gain speed, but true maturity comes when those tools are used with clear oversight, accountability and trust.

Lean testing is not minimal testing. It is more intentional testing. Start small and improve continuously.

To wrap up

Constraints are an advantage. In the end, the limitations the team faced became a source of strength. Without excess resources, they were forced to prioritise. Without the ability to test everything, they learned to test what mattered.

Lean testing didn’t attempt to replicate enterprise models on a smaller scale. Instead, it created a different approach, one that was focused, collaborative and grounded in real-world constraints.

As software continues to evolve and delivery speeds increase, this kind of clarity is becoming less of an alternative and more of a necessity.

What do you think?

Lean testing is often introduced as a way to improve efficiency and delivery speed, but its real impact on how teams approach quality isn't always immediately clear. As organisations move through different levels of maturity, from reactive testing to AI-augmented, human-governed quality systems, the way they think about risk, automation and responsibility begins to shift significantly. If you're working in environments like startups, public sector programmes or distributed teams under constraints, I’d be interested to hear how these pressures are shaping your testing approach. Use the stages in this article as a framework for reflecting on where you are today and where you might be heading next.

Questions to discuss

- How does your team prioritise testing when time or budget is tight?

- Have you formally adopted risk-based testing, or is prioritisation still informal?

- Are you seeing AI improve quality in your workflow, or introduce new categories of risk?

- How do you validate AI-generated tests or code in your team?

- What guardrails do you have in place to prevent over-reliance on automation?

- In small or cross-functional teams, how is quality ownership distributed?

- What’s one lean practice that made the biggest difference in your workflow?

- Have you reduced documentation or process overhead without sacrificing clarity? How?

- What metrics do you rely on to measure quality impact, and are they truly meaningful?

- If you work in a highly constrained environment (startup, NGO, embedded systems, public sector), what unique QA challenges do you face?

- What’s the biggest misconception you’ve encountered about “lean” testing?

- If you could change one thing about how your organisation approaches testing under pressure, what would it be?

Actions to take

- Identify your five most critical flows

- Identify and map your highest risk features

- Remove one unnecessary documentation artefact

- Automate one high-value regression path

- Introduce structured exploratory testing

- Review how AI is currently used in your workflow

- Reassign quality ownership explicitly across roles

- Define three meaningful metrics