Matthew Whitaker

QA Team Lead

He/Him

QA professional with 9+ years in various industries. I enjoy implementing testing frameworks in manual and automated testing. Passionate about collaboration and improvement.

Achievements

Certificates

Awarded for:

Achieving one or more Community Stars in five or more unique months

Activity

earned:

Lean testing under pressure: How small teams deliver high-impact quality in highly constrained environments

Contributions

Implement lean testing strategies that enable small teams to deliver high-quality software efficiently within resource-constrained environments.

Identify sources of unnecessary cognitive load and apply strategies to focus on meaningful analysis and exploration.

Cognitive load refers to the amount of mental effort required to perform a task, including everything a tester must keep in mind while testing, such as requirements, system behaviour, test data, environments, tools, expected results, and potential failure modes. Cognitive load is often described in three different forms:

Intrinsic cognitive load: This comes from the inherent complexity of the system being tested. Distributed systems, complex business rules, edge cases, and integrations naturally demand more mental effort.

Extraneous cognitive load: This is unnecessary mental effort caused by poor tooling, unclear requirements, fragmented documentation, inconsistent environments, or inefficient processes. Unlike intrinsic load, this type is avoidable.

Germane cognitive load: This is the productive mental effort spent learning, problem-solving, and building mental models of the system. This is the load on which we want testers to spend their energy.

It would be impossible to eliminate cognitive load entirely. Instead, effective testing requires reducing extraneous load so testers can devote their finite mental capacity to meaningful analysis and exploration.

Understand why testing must evolve beyond deterministic checks to assess fairness, accountability, resilience and transparency in AI-driven systems

Self-adaptive systems are structured to change their behaviour while running. They do this in response to changes in their environment or within the system itself, for example, to keep working properly when conditions change or when unexpected situations occur. The goal is usually to reduce the need for manual intervention and let the system handle uncertainty on its own.

Probabilistic behaviour is system behaviour governed by probability rather than being fully deterministic. In such systems, the same input or situation may lead to different outcomes, each with a certain likelihood. This approach is commonly used to model uncertainty, variability or incomplete information, for example, in machine learning systems, stochastic simulations or adaptive systems that must operate under uncertain conditions.
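
One way to picture a check that goes beyond a single deterministic assertion is to sample the system many times and assert on the observed rate within a tolerance. The Python sketch below is only an illustration under stated assumptions: `sample_outcome`, the 0.7 target rate, the trial count and the tolerance are all hypothetical and do not come from the original article.

```python
import random


def sample_outcome(success_probability: float, rng: random.Random) -> bool:
    """Hypothetical stand-in for a probabilistic component.

    Returns True with roughly the given probability.
    """
    return rng.random() < success_probability


def observed_rate(success_probability: float, trials: int, seed: int) -> float:
    """Run the component many times and return the fraction of True outcomes."""
    rng = random.Random(seed)  # seeded so the check itself is reproducible
    hits = sum(sample_outcome(success_probability, rng) for _ in range(trials))
    return hits / trials


def rate_within_tolerance(
    expected: float,
    trials: int = 10_000,
    tolerance: float = 0.03,
    seed: int = 42,
) -> bool:
    """Statistical check: is the observed rate close enough to the expected rate?"""
    return abs(observed_rate(expected, trials, seed) - expected) <= tolerance


if __name__ == "__main__":
    # Assumed target rate of 0.7; a real system would supply its own expectation.
    print(rate_within_tolerance(0.7))
```

The tolerance and number of trials trade off test run time against the risk of false alarms, so in practice they would be chosen to match the variability the team is willing to accept.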

Boost your career in quality engineering with the MoT Software Quality Engineering Certificate.

Pairing QA Engineers and Analysts isn't just a process tweak; it's a cultural shift that transforms siloed testing into a collaborative, balanced and business-aligned effort.

I’m excited to share that, alongside TeamMoT, we’ll be gathering 50 Leaders in Quality on September 30th.

What looks like a one-day event is actually the beginning of a bigger journey: a committ...

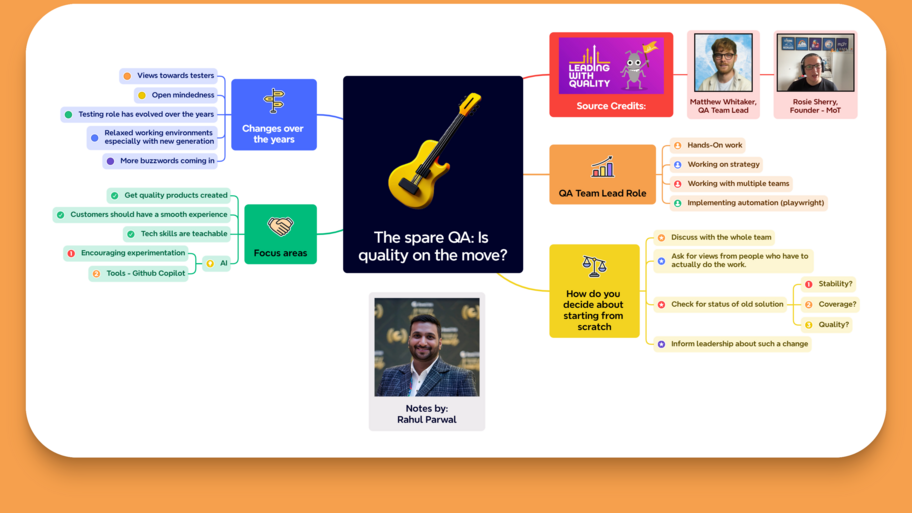

Notes on key moments and learnings from Leading with Quality - Podcast, where the guest was Matthew Whitaker.

You can watch the podcast series here: https://www.ministryoftesting.com/collections...

See how role titles, team trust, and a strategic ‘spare QA’ help scale quality across legacy systems and ways of working