Staying in Control with AI Agents

02 May 2026

Rahul Parwal

AI Chapter

The AI didn't make a mistake. You did. You trusted it.

The AI doesn't know your quality bar. It doesn't know your edge cases. It doesn't know what "good enough" means in your codebase.

It only knows what you tell it. And most people tell almost nothing.

The engineers staying in control are doing something different:

1. They use plan mode.

- Have the agent state its plan first: understanding → approach → files to change → assumptions → next steps.

- Misalignment caught in the plan costs almost nothing. Misalignment caught after the code is generated costs rework.

2. They git diff before they push.

- Git diff is not optional. It's your first line of defense.

- Trust the agent. But diff the output. Always.

3. They audit agents with agents.

- Agent A writes the code. Agent B reviews it.

- Audit angles: correctness, edge cases, best practices.

4. They define the quality bar explicitly.

- The AI will meet your bar. But only if you set it.

- Vague expectations → vague output → vague regret.

This is the process most people skip.
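Steps 1 and 2 can be tied together with a small check: compare the files the plan said would change against the files the diff says did change. The sketch below is one way to do that, not a prescribed tool. The function names and example paths are hypothetical; the only external call is `git diff --name-only`, which lists tracked files that differ from a base revision (untracked files will not appear).

```python
import subprocess


def changed_files(base: str = "HEAD") -> list[str]:
    """List tracked files in the working tree that differ from `base`.

    Must be run inside a git repository. Untracked files are not reported
    by `git diff`, so check `git status` for those separately.
    """
    result = subprocess.run(
        ["git", "diff", "--name-only", base],
        capture_output=True,
        text=True,
        check=True,
    )
    return [line for line in result.stdout.splitlines() if line.strip()]


def unplanned_changes(planned: set[str], changed: list[str]) -> list[str]:
    """Return changed files that were not part of the agreed plan, sorted."""
    return sorted(set(changed) - planned)


# Hypothetical usage, with the plan's "files to change" typed in by hand:
#   planned = {"src/cart.py", "tests/test_cart.py"}
#   surprises = unplanned_changes(planned, changed_files())
#   if surprises:
#       print("Review before pushing:", surprises)
```

An empty result does not mean the change is correct. It only means the agent stayed inside the files it said it would touch, so you still read the diff itself.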

TL;DR below 👇

→ Good prompt → Plan → Diff → Review → Ship
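For the Review step, the quality bar only helps if it is written down and handed to the reviewing agent. As a rough sketch, the bar can live in a small data structure that is turned into the review request for Agent B. Everything here is an assumption for illustration: `QualityBar`, `build_review_prompt`, and the example requirement are hypothetical, and how the prompt reaches a second agent depends on the tool you use. Only the three audit angles come from the post.

```python
from dataclasses import dataclass, field


@dataclass
class QualityBar:
    """Explicit expectations for a change (hypothetical structure)."""

    requirements: list[str]
    # The audit angles named in the post.
    audit_angles: list[str] = field(
        default_factory=lambda: ["correctness", "edge cases", "best practices"]
    )


def build_review_prompt(bar: QualityBar, diff_text: str) -> str:
    """Compose a plain-text review request from the bar and a diff."""
    lines = ["Review the following diff against the quality bar below.", ""]
    lines.append("Quality bar:")
    lines += [f"- {req}" for req in bar.requirements]
    lines.append("")
    lines.append("Audit angles:")
    lines += [f"- {angle}" for angle in bar.audit_angles]
    lines += ["", "Diff:", diff_text]
    return "\n".join(lines)
```

A call such as `build_review_prompt(QualityBar(["Every new function has a unit test"]), diff_text)` yields text you can paste into a second agent session. Writing the requirements down is the point; the wrapper is incidental.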

The people who figured this out aren't writing more prompts.

You can learn this and more from this article 👇. Read it before your next agent session.

How to Stay in Control of AI Coding Agents: A Practical Guide for Testers Using Claude Code and GitHub Copilot | ShiftSync Community

Rahul Parwal

Test Specialist

Rahul Parwal is a Test Specialist with expertise in testing, automation, and AI in testing. He's an award-winning tester and international speaker.

Want to know more? Check out testingtitbits.com

Sign in

to comment

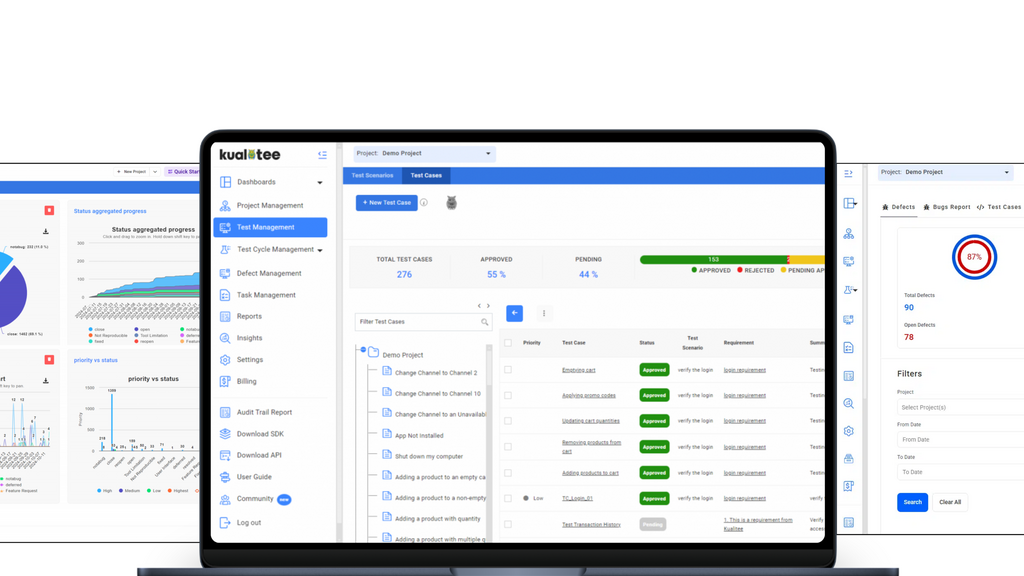

Manage your entire QA lifecycle in one place. Sync Jira, automate scripts, and use AI to accelerate your testing.

Explore MoT

AI in QA shouldn’t mean more work to manage. Join us for this hands-on workshop where we show how to maximize the AI capabilities in QMetry and Reflect.

Advanced prompting skills to turn AI into your trusted testing companion.

Into the MoTaverse is a podcast by Ministry of Testing, hosted by Rosie Sherry, exploring the people, insights, and systems shaping quality in modern software teams.