The Testing Mindset (in my humble opinion) is an umbrella term that covers a number of different mindsets, or ways of thinking about a subject. As I said in my September 2025 article (see reference), "From the beginning of my software testing career in 2003, I’d repeatedly heard about the ‘tester's mindset.’ There were very few actual definitions and none that I felt fit well. I did some research to see if someone specific coined the term, but it is more likely that it evolved over time." In that article, I suggested that, as testers or quality professionals, we should have more than one mindset. I gave 11 examples of software testing mindsets, and since then, I've added another. I asked in the article for people to suggest their own or expand on them. Maybe you can add to this glossary term with your own ideas and mindsets. Or, you may see it differently, which is great. Debate pushes the craft forward. Here is the latest list as of April 2026. They are now grouped and I hope to share them as a model in the future.

Visionary and open

Blue-sky (innovator)

Creative (visionary)

Exploratory (investigator)

Inclusive (ally)

Analytical and grounded

Scientific (realist)

Sceptical (analyst)

Critical (evaluator)

Risk-based (strategist)

Philosophical and connected

Ethical (moralist)

Holistic (connector)

Collaborative (partner)

Dark and aggressive

Malevolent (saboteur)

Magic values are values hard-coded directly in your tests without any extra comment or context. These values make your code less readable and harder to maintain. Magic strings can confuse the reader of your tests. If a string looks out of the ordinary, they might wonder why that value was chosen for a parameter or return value. This type of string value might lead them to take a closer look at the implementation details, rather than focus on the test.
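As a minimal sketch of the difference, the two tests below check the same behaviour, once with a magic value and once with a named constant. The `add` function, its limit, and the name `MAXIMUM_RESULT` are hypothetical and exist only for illustration.

```python
MAXIMUM_RESULT = 1000  # hypothetical limit enforced by the code under test


def add(a: int, b: int) -> int:
    """Hypothetical function under test: refuses sums above MAXIMUM_RESULT."""
    total = a + b
    if total > MAXIMUM_RESULT:
        raise OverflowError(f"sum {total} exceeds {MAXIMUM_RESULT}")
    return total


def test_add_big_number_with_magic_value() -> None:
    # Magic value: why 1000? The reader has to open add() to find out.
    try:
        add(1, 1000)
    except OverflowError:
        return
    raise AssertionError("expected OverflowError")


def test_add_number_above_maximum_raises() -> None:
    # Named constant: the intent (one past the allowed maximum) is visible in the test.
    try:
        add(1, MAXIMUM_RESULT)
    except OverflowError:
        return
    raise AssertionError("expected OverflowError")
```

Both tests pass against this sketch. The second one lets the reader stay focused on the test instead of the implementation.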

A stub is a controllable replacement for an existing dependency (or collaborator) in the system. By using a stub, you can test your code without dealing with the dependency directly.
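A small sketch of the idea, under assumed names: `price_in_currency` is a hypothetical function under test, and `StubExchangeRateSource` stands in for a real exchange-rate service that the test does not want to call.

```python
from typing import Protocol


class ExchangeRateSource(Protocol):
    """The dependency (collaborator) the code under test relies on."""

    def rate(self, currency: str) -> float: ...


class StubExchangeRateSource:
    """Stub: returns a fixed, test-controlled rate instead of calling a real service."""

    def __init__(self, fixed_rate: float) -> None:
        self._fixed_rate = fixed_rate

    def rate(self, currency: str) -> float:
        return self._fixed_rate


def price_in_currency(amount_gbp: float, currency: str, rates: ExchangeRateSource) -> float:
    """Hypothetical code under test: converts a GBP amount using the given rate source."""
    return round(amount_gbp * rates.rate(currency), 2)


def test_price_uses_rate_from_dependency() -> None:
    # The test controls the dependency's answer, so the result is predictable.
    stub = StubExchangeRateSource(fixed_rate=2.0)
    assert price_in_currency(10.0, "USD", stub) == 20.0
```

Passing the dependency in as a parameter is one design choice that makes the swap easy. Other approaches, such as patching, would also work.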

Impostor syndrome affects a variety of individuals. If you suffer from it, you have an ongoing sense that you are not good enough, leading you to see yourself as a fraud. You become anxious about being discovered as a fraud when you are not in fact a fraud! Even with achievements, skills, and proficiency, self-distrust can arise, causing individuals to question their capabilities and attribute their achievements to mere chance, timing, or external influences. Over time they can completely lose faith in their ability to do their jobs.

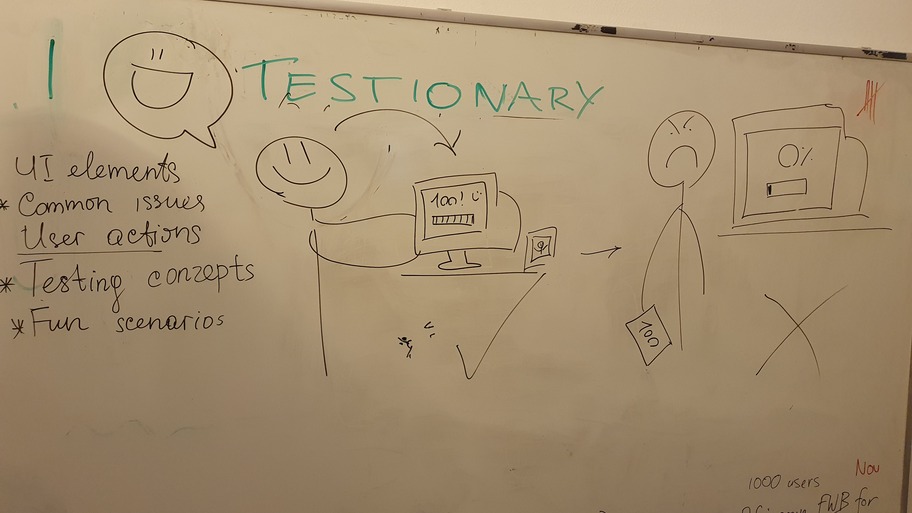

Testionary is a team game based on Pictionary, adapted for software testing vocabulary.

If you've never played Pictionary: it's a party game where one person draws a word or phrase on a whiteboard while their teammates race to guess what it is. The drawer can't talk, write letters, or use numbers. That's it. The fun comes from terrible drawings and wild guesses. Here's a quick overview of the full Pictionary rules.

Testionary keeps that core idea but swaps regular words for software testing terms. One person draws "regression bug" or "Friday deployment." Everyone else shouts guesses.

The game uses 5 categories: UI elements, common issues, user actions, testing concepts, and fun scenarios. You can generate words fast with an LLM or write them on sticky notes during the game.

It works for meetup icebreakers, team building sessions, onboarding new hires, or any moment when your group needs energy and connection. You don't need drawing skills. You don't need testing experience. You just need a whiteboard and a willingness to laugh at yourself.

A couple of definitions are floating around at the moment.

The process of analysing an AI-generated output to infer the prompt that could have produced it. Also called prompt reconstruction.

Instead of you writing the prompt yourself, the AI asks you questions first. Also called AI-led prompting.

Crisis Engineering is a field guide and framework for leading through high-stakes, chaotic meltdowns—such as IT system failures or organizational disasters—to transform them into lasting improvements. It involves proactive sensemaking, identifying crisis signals, and using hands-on tools to move from chaos to resilient, strengthened systems.

QA usually owns the automated tests. When a company mandates a level of automation, QA spends part of its time growing the number and scope of the automated tests. Over time, maintenance of these tests can become expensive, and most of a QA team's time can be spent maintaining the existing automation while also automating every new test. This becomes a perpetual treadmill of building test automation that can take time and energy away from other valuable QA tasks such as design and specification review, functional testing, exploratory testing, interactive testing, etc. "Now, here, you see, it takes all the running you can do, to keep in the same place" - The Red Queen, Through the Looking-Glass

Grey box testing is a method where the tester has partial knowledge of the application's internal structure. It is the middle ground between black box and white box testing. You might have access to the database schema or the API documentation while you test the user interface. This allows you to write better test cases because you understand the underlying logic.

It is particularly useful for integration testing where you want to see how data flows between different components. During refinement, you might use your knowledge of the system architecture to identify specific risks. By looking at the acceptance criteria and the technical design, you can ensure that the tests cover both the user journey and data integrity.

It helps to find bugs that a pure black box test would miss, such as a record not being updated correctly in the background or an API returning more data than it should. It is a smart way to test because it combines the user perspective with technical insight. You aren't just clicking buttons. You are verifying that the entire system is behaving as it should.
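To illustrate the "record not being updated in the background" case, here is a minimal sketch that uses an in-memory SQLite database. The `users` table, its columns, and `update_newsletter_preference` are hypothetical stand-ins for a real application and its schema.

```python
import sqlite3


def create_schema(conn: sqlite3.Connection) -> None:
    # Hypothetical schema the tester knows about from the documentation.
    conn.execute(
        "CREATE TABLE users ("
        "id INTEGER PRIMARY KEY, email TEXT NOT NULL, newsletter INTEGER NOT NULL)"
    )


def update_newsletter_preference(conn: sqlite3.Connection, user_id: int, subscribed: bool) -> str:
    """Hypothetical application code: what a user triggers through the UI or API."""
    conn.execute(
        "UPDATE users SET newsletter = ? WHERE id = ?", (int(subscribed), user_id)
    )
    conn.commit()
    return "Preferences saved"


def test_unsubscribe_updates_the_stored_record() -> None:
    conn = sqlite3.connect(":memory:")
    create_schema(conn)
    conn.execute(
        "INSERT INTO users (id, email, newsletter) VALUES (1, 'a@example.com', 1)"
    )

    # Black box view: the user-facing response looks fine.
    message = update_newsletter_preference(conn, user_id=1, subscribed=False)
    assert message == "Preferences saved"

    # Grey box view: use knowledge of the schema to confirm the record really changed.
    row = conn.execute("SELECT newsletter FROM users WHERE id = 1").fetchone()
    assert row == (0,)
    conn.close()
```

A pure black box test would stop at the first assertion. The second assertion is where the partial knowledge of the internals pays off.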

Sad-path testing is a very general term that covers testing the unexpected. It involves verifying how an application behaves when it receives invalid data or encounters an error. It is the direct opposite of happy path testing, which only follows the intended user journey. When you perform sad-path testing, you are checking that the system handles exceptions as required. This often means looking at acceptance criteria to see how the system should respond to incorrect logins, timed-out sessions, or empty fields. It is a critical part of making a product robust and reliable for real users. You are essentially trying to find where the logic breaks down when a user does something unexpected. By identifying these scenarios during refinement, you can ensure the developers build in proper error messages and recovery steps. It helps to move beyond basic functionality and ensures the software can handle the messiness of the real world. As a general term, it can cover many areas, but it is a simple way to explain that testing is for more than confirming software does what it is supposed to do.
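The three scenarios named above (incorrect logins, timed-out sessions, empty fields) can be sketched as tests next to a single happy-path check. The `validate_login` function, its timeout, its credentials, and its messages are all hypothetical and stand in for whatever the acceptance criteria actually specify.

```python
SESSION_TIMEOUT_SECONDS = 900  # hypothetical timeout taken from the acceptance criteria


def validate_login(username: str, password: str, session_age_seconds: int) -> str:
    """Hypothetical function under test; rules and messages are illustrative only."""
    if not username or not password:
        return "Username and password are required"
    if session_age_seconds > SESSION_TIMEOUT_SECONDS:
        return "Session timed out, please sign in again"
    if (username, password) != ("tester", "correct-horse"):
        return "Incorrect username or password"
    return "OK"


def test_happy_path_valid_login() -> None:
    assert validate_login("tester", "correct-horse", 10) == "OK"


def test_sad_path_empty_fields() -> None:
    assert validate_login("", "", 10) == "Username and password are required"


def test_sad_path_timed_out_session() -> None:
    expired = SESSION_TIMEOUT_SECONDS + 1
    assert validate_login("tester", "correct-horse", expired) == (
        "Session timed out, please sign in again"
    )


def test_sad_path_incorrect_login() -> None:
    assert validate_login("tester", "wrong", 10) == "Incorrect username or password"
```

Each sad-path test asserts on the specific error message, because a clear message and a recovery step are usually what the acceptance criteria ask for.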

Backlog refinement is when the team gets together to review the work waiting in the queue. It is the time when a user story is reviewed to make sure the requirements are actually understood by everyone. You can spend this time adding acceptance criteria, so there is no confusion about what 'done' looks like. 3 Amigos sessions are similar but are a more focused deep dive with a smaller group, which can include product owners or people not directly involved with the team. Refinement can also be when you break down larger requirements into smaller, more manageable user stories or tasks. You are effectively checking that the user story or requirement is solid and good to go. This prevents the team from picking up a ticket in a sprint and then realising they do not know how to start, or how they will know when they are done.

A Sandbox is an isolated environment used to safely run, test, or experiment with software without affecting live systems, real users, or production data. It provides a controlled space where changes can be made, code can fail, and unexpected behaviour can be explored without risk outside the sandbox. For example, a team building an online checkout feature might use a sandbox version of their application to test new payment logic. In the sandbox, testers can create fake users, place dummy orders, and deliberately trigger errors like failed payments or timeouts without charging real customers or impacting the live website. Once the team is confident the change behaves as expected, it can then be safely promoted to production.

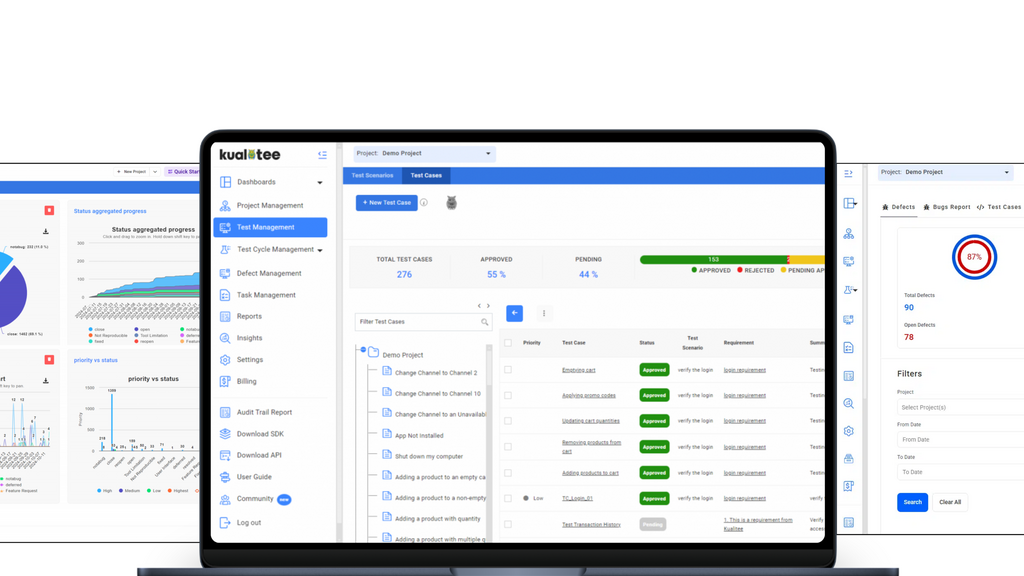
