Emily O'Connor

Principal Quality Engineer

She/Her

Technical leader with a sixth sense for bugs. Avid learner, passionate about translating "dev-speak" to help teams adopt automation and AI-accelerated quality engineering. I believe great software starts with user-focused problem solving, and automation should surface the bugs that PMs actually care about fixing.

Achievements

Certificates

Awarded for:

Passing the exam with a score of 88%

Awarded for:

Passing the exam with a score of 95%

Activity

commented on:

"Chapters

Presentation starts at 6:00

Demo of the test case generator 16:45

Part 2: Automation & Self Healing 22:55

Demo of automation 29:30

Part 3: Execution & Reporting 36:00

Demo 38:30

Part 4: Bug Triage 40:30

Questions 47:00"

awarded Imran Ali for:

AI-driven testing in practice: from requirements to reliable automation

earned:

AI-driven testing in practice: from requirements to reliable automation

earned:

San Francisco Depot

contributed:

Definitions of San Francisco Depot

Contributions

San Francisco Depot is a mnemonic for the SFDPO software exploratory testing heuristic. SFDPO stands for Structure, Function, Data, Platform and Operations. Each of these represents a different aspect of a software product.

Structure

Structure is what the product is. This includes its physical files, utility programs, physical materials, etc.

Function

Function is what the product does. This is like the product's functional requirements. How does it handle errors? What is its UI? How does it interface with the operating system?

Data

Data is what the product processes. What kinds of input does it process? This can be input from the user, the file system, etc. What kind of output or reports does it generate? Does it come with default data? Is any of its input sensitive to timing or sequencing?

Platform

Platform is what the product depends upon. What operating systems, browsers, runtime libraries, etc. does it run on? Does the user need to configure the environment? Does it depend on third-party components?

Operations

Operations are scenarios in which the product will be used. Who are the application's users? Where and how will they use it?

Dogfooding refers to software developers and companies using their own products and services, just as their customers do. Imagine a chef who will not taste their own dish. It makes you think, doesn’t it? The same idea applies to businesses that do not use their own products. Dogfooding shows how much a company believes in what it creates, and it helps the development team see and experience the product's value directly. Dogfooding, or eating your own dog food, helps you see what your customers see.

SCA (Software Composition Analysis) tools scan your manifest files (e.g. your package.json) against known vulnerability databases. They look for known vulnerabilities in third-party libraries, such as malicious npm packages. SCA tools match every package and direct dependency in your project, regardless of whether your code actually uses the vulnerable functions, which can create alert fatigue. Your teams may already have SCA tools in the pipeline without using the term, since it’s common to refer to them by vendor or tool name, such as Snyk, Endor Labs, Black Duck, OWASP Dependency-Check, Grype, GitHub Advanced Security and many others.
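The matching step described above can be sketched roughly as follows. This is a simplified illustration, not how any particular vendor's tool works: the `ADVISORIES` table, the package names and the versions are all hypothetical, and real tools compare version ranges against live vulnerability databases rather than exact version strings.

```python
import json

# Hypothetical advisory data: package name -> known-vulnerable versions.
# Real SCA tools query vulnerability databases and compare version ranges.
ADVISORIES = {
    "example-parser": {"1.2.0", "1.2.1"},
    "example-http": {"0.9.0"},
}


def scan_manifest(manifest_text: str) -> list[str]:
    """Return an alert for every declared dependency with a known-vulnerable version."""
    manifest = json.loads(manifest_text)
    declared = {
        **manifest.get("dependencies", {}),
        **manifest.get("devDependencies", {}),
    }
    alerts = []
    for name, version in sorted(declared.items()):
        # Matching is by name and version only. Nothing here checks whether
        # the project calls the vulnerable function, which is where the
        # alert fatigue comes from.
        if version in ADVISORIES.get(name, set()):
            alerts.append(f"{name}@{version}")
    return alerts


# Hypothetical package.json content used only for this illustration.
PACKAGE_JSON = """
{
  "dependencies": {"example-parser": "1.2.0", "example-ui": "3.0.0"},
  "devDependencies": {"example-http": "0.9.0"}
}
"""

print(scan_manifest(PACKAGE_JSON))
```

Both flagged packages are reported even if the project never touches the affected code paths, which is why triage of SCA findings usually needs a human to judge relevance.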

Any fool can write code that a computer can understand. Good programmers write code that humans can understand.

Clean code is code that is easy to read, easy to understand and easy to maintain. It is written for humans first and computers second. Here are some characteristics of clean code:

It expresses its intent clearly, meaning that variables, functions, and classes have descriptive names that tell you exactly what they do. It often follows a naming and casing standard, so that you cannot identify individual authors.

Functions perform a single action.

Code adheres to the DRY principle (Don't Repeat Yourself). If you need to change a business rule, you should only have to change it in one place.

It isn't longer than it needs to be, including comments. It contains no dead code, unused variables, or speculative features (YAGNI - you ain't gonna need it).

It handles errors gracefully. Failures such as a network outage, missing files or bad user input are caught and handled explicitly, leaving the system in a predictable state.

It has minimal dependencies. Internally, the code keeps connections between different parts of the software to a minimum. Externally, multiple packages aren't brought in for the same action (which would break DRY and increase the security surface area).

It is self-documenting. The code itself explains what it is doing and comments add why an approach was taken or the business logic being implemented, rather than explaining the lines of code they cover.

Similar to being self-documenting, clean code is verifiable. It is written in a way that makes it easy to test, and it actually passes those automated tests consistently.
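A few of the characteristics above can be illustrated with a small sketch. The shipping rule, the threshold, the fee and every name below are hypothetical and chosen only to show descriptive naming, single-action functions, a business rule kept in one place, and explicit error handling.

```python
# Hypothetical business rule, defined once so a change happens in one place (DRY).
FREE_SHIPPING_THRESHOLD = 50.00
STANDARD_SHIPPING_FEE = 4.99


class InvalidOrderTotalError(ValueError):
    """Raised when an order total cannot be priced."""


def qualifies_for_free_shipping(order_total: float) -> bool:
    # Single action: answers one question about the order.
    return order_total >= FREE_SHIPPING_THRESHOLD


def calculate_shipping_fee(order_total: float) -> float:
    # Bad input is rejected explicitly instead of producing a silent wrong answer.
    if order_total < 0:
        raise InvalidOrderTotalError(f"order total cannot be negative: {order_total}")
    if qualifies_for_free_shipping(order_total):
        return 0.0
    return STANDARD_SHIPPING_FEE


print(calculate_shipping_fee(20.00))
print(calculate_shipping_fee(75.00))
```

Because each function does one thing and takes plain values, the behaviour at the threshold and on bad input is straightforward to check in an automated test.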

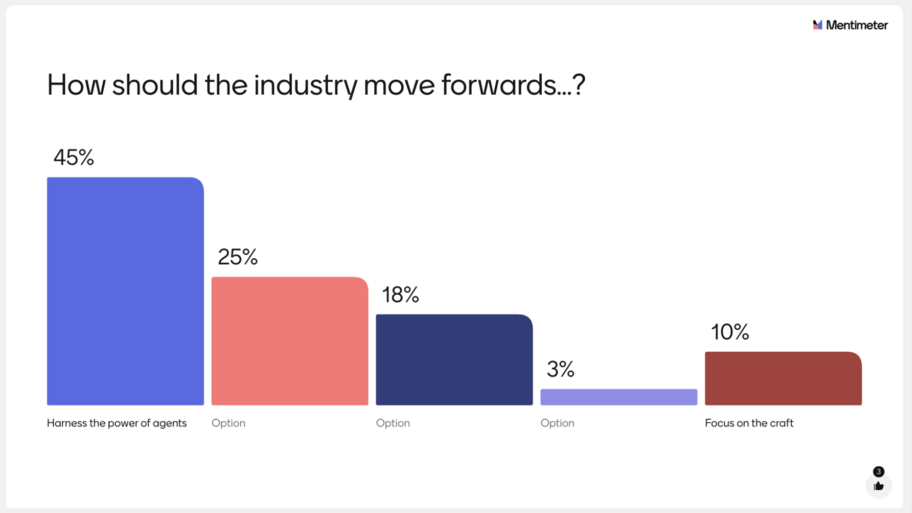

Yesterday, at Leeds Testing Atelier, I presented some slides around the use of AI (as a tool) to enable automation. After defining automation vs test automation and AI (technology that enables comp...

Emily kicks off track one

Magic values are values hard-coded directly in your tests without any extra code comment or context. These values make your code less readable and harder to maintain. Magic strings can confuse the reader of your tests. If a string looks out of the ordinary, they might wonder why a certain value was chosen for a parameter or return value. This type of string value might lead them to take a closer look at the implementation details, rather than focus on the test.
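As a rough before-and-after, the sketch below contrasts a test that uses a magic value with one that names it. The password rule, `MINIMUM_PASSWORD_LENGTH` and `is_valid_password` are hypothetical, and the tests are written as plain functions with bare asserts, in the style a runner such as pytest would collect.

```python
# Hypothetical rule and function under test, for illustration only.
MINIMUM_PASSWORD_LENGTH = 8


def is_valid_password(password: str) -> bool:
    return len(password) >= MINIMUM_PASSWORD_LENGTH


def test_rejects_short_password_with_magic_value():
    # Magic value: the reader has to count characters and look up the rule
    # to work out why "abc1234" should be rejected.
    assert not is_valid_password("abc1234")


def test_rejects_password_one_character_below_minimum():
    # The value is derived from the rule, so the intent is visible in the test.
    password_one_character_too_short = "a" * (MINIMUM_PASSWORD_LENGTH - 1)
    assert not is_valid_password(password_one_character_too_short)


# Run both tests directly when no test runner is available.
test_rejects_short_password_with_magic_value()
test_rejects_password_one_character_below_minimum()
```

The second test also keeps passing for the right reason if the minimum length changes, whereas the first would need its literal updated by hand.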

A stub is a controllable replacement for an existing dependency (or collaborator) in the system. By using a stub, you can test your code without dealing with the dependency directly.
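A minimal sketch of the idea, assuming a hypothetical currency conversion function that depends on an exchange rate service. `LiveExchangeRateClient`, `StubExchangeRateClient` and `convert` are invented names for illustration, and the stub is hand-written rather than taken from any mocking library.

```python
class LiveExchangeRateClient:
    """Hypothetical real dependency: would call a remote service in production."""

    def get_rate(self, currency: str) -> float:
        raise RuntimeError("network access is not available in unit tests")


class StubExchangeRateClient:
    """Controllable replacement: returns whatever rate the test configures."""

    def __init__(self, rate: float) -> None:
        self.rate = rate

    def get_rate(self, currency: str) -> float:
        return self.rate


def convert(amount: float, currency: str, rate_client) -> float:
    # The code under test only needs something with a get_rate() method,
    # so the stub can stand in for the live client.
    return round(amount * rate_client.get_rate(currency), 2)


def test_convert_applies_the_rate_from_the_client():
    stub_client = StubExchangeRateClient(rate=1.25)
    assert convert(10.0, "EUR", stub_client) == 12.5


test_convert_applies_the_rate_from_the_client()
```

Passing the dependency in as a parameter is one design choice that makes stubbing possible; the test then controls the rate and never touches the network.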

Master the RICCE framework to transition from manual testing to AI-augmented automation by leveraging structured prompting and the Playwright MCP agent ecosystem.

Evaluate the shift from "automate all" to "review all" by using AI agents for test plans while applying human-led ACE feedback to ensure code quality and business relevance.

Apply the "5 Whys" technique to both technical debugging and organisational workflows to uncover deep-seated root causes and implement sustainable continuous improvements.