Aj Wilson

Quality Engineering Manager II / Technical Development Lead / Chief Quality Officer

She/Her

I am Open to Write, Teach, Work, Speak

Intellectual (non practicing) | Neurospicy | UN Women Delegate | Above all Curious & Challenging - Quality and Tech Leadership for 20+ years.

Achievements

Certificates

Awarded for:

Achieving 5 or more Community Star badges

Activity

earned:

2.3.0 of MoT Software Quality Engineering Certificate

earned:

This Week in Quality

registered for:

Share your week’s highlights, challenges, and lessons in quality

Interests

Contributions

Velocity is a metric used to track the rate of progress in software development. For testers, the term carries two distinct meanings that affect how quality and timelines are managed.

1. The Agile Metric (Operational)

In standard Agile practices, velocity is the total number of story points a team completes during a sprint.

For Testers: It serves as a capacity planning tool. A spike in velocity without a corresponding increase in team size often signals "testing debt," where speed is prioritised over thorough validation or regression testing.

2. The AI/Market Narrative (Strategic)

In the current "AI-first" landscape, velocity refers to the overall speed of deployment and organisational pivot.

For Testers: This is often viewed critically as "speed over direction." High organisational velocity can lead to "accelerated chaos" if the testing infrastructure (automated suites, environments, and safety rails) isn't scaled to match the increased deployment frequency.
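The Agile meaning above can be sketched in a few lines of Python. This is a minimal illustration, not a standard formula: the function names and the `spike_ratio` threshold are assumptions made up for this example, and the sprint figures are invented sample data.

```python
from statistics import mean


def sprint_velocity(completed_points):
    """Total story points completed in one sprint."""
    return sum(completed_points)


def velocity_spike(previous_velocities, current_velocity,
                   previous_team_size, current_team_size,
                   spike_ratio=1.25):
    """Return True when velocity jumps while the team has not grown.

    spike_ratio is a hypothetical threshold: 1.25 means "25% above the
    recent average". Tune it for your own team.
    """
    baseline = mean(previous_velocities)
    jumped = current_velocity > baseline * spike_ratio
    team_grew = current_team_size > previous_team_size
    return jumped and not team_grew


# Invented sample data: three earlier sprints, then the current one.
recent = [30, 32, 31]
current = sprint_velocity([5, 8, 13, 8, 8, 3])
if velocity_spike(recent, current, previous_team_size=6, current_team_size=6):
    print("Velocity spike without team growth: check for testing debt")
```

A flag like this is only a prompt for a conversation about what was skipped to go faster. It does not prove that testing debt exists.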

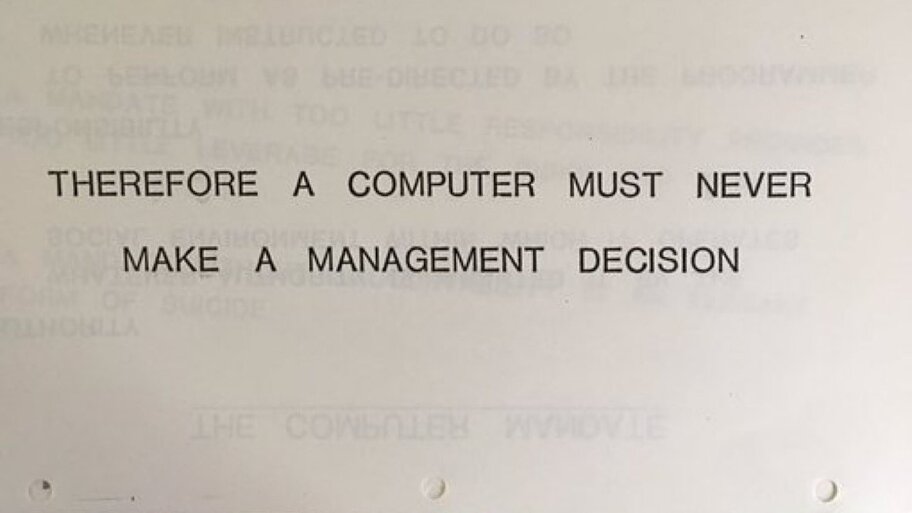

This is from an IBM presentation in 1979.

A JNTX system is a specialised software architecture used in AI-native and agentic platforms. Instead of relying purely on traditional, hard-coded logic, it deploys features and functionality using a combination of autonomous agents and prompts. For software testers, this means shifting from validating deterministic code to validating probabilistic interactions driven by Large Language Models (LLMs).

As JNTX platforms scale, QE or QA teams frequently encounter a major architectural risk known as "prompt spaghetti." This occurs when integration logic becomes overly complex and developers attempt to handle system parsing and routing directly within natural language prompts.

For a tester, prompt spaghetti results in:

Highly brittle systems that are nearly impossible to reliably automate.

Non-deterministic outputs that cause widespread flaky tests.

A massive increase in edge cases, hallucinations, and unhandled exceptions.

A service mesh is a dedicated infrastructure layer that controls how microservices share data with one another. It uses "sidecar" proxies to automatically handle networking, security, and monitoring so software engineers don't have to build those features into the application code.

Service mesh testing is the practice of validating how microservices interact by using the network layer that connects them. Instead of testing a single service in isolation, you use the service mesh to run "what if" scenarios and safety checks on the live connections between services.

Think of it as a flight simulator for your software network that enables several advanced testing strategies:

Canary Testing: Gradually routing a small percentage of users (e.g., 1%) to a new update. If the metrics look good, you "dial up" the traffic; if not, you roll back instantly with minimal impact.

Traffic Shadowing (Mirroring): The mesh creates a "ghost copy" of real user traffic and sends it to a test version. This allows you to see how new code handles real-world stress and data without the users ever knowing or being affected.

Fault Injection (Chaos Engineering): Programmatically forcing the network to act as if it is slow or as if a service has crashed. This confirms the system is resilient—staying upright even when individual parts fail.

Observability "X-ray": Provides a real-time map of every conversation between services. If a request fails, the mesh shows you exactly where the chain broke, eliminating the guesswork in debugging.

Why it matters: Traditional testing often happens in a "lab" (staging environment) that never quite replicates the real world. Service mesh testing allows teams to test against real-world conditions with granular control, significantly reducing the risk of deployment failures.
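The canary, shadowing, and fault injection strategies above can be imitated in plain Python to show the idea. This is a toy simulation under stated assumptions, not a real service mesh API: `route`, `mirror`, and `call_with_fault` are hypothetical helper names, and in practice a mesh applies these rules in its sidecar proxies through configuration rather than application code.

```python
import random


def route(weights, rng):
    """Canary testing: pick a service version according to traffic weights."""
    versions = list(weights)
    return rng.choices(versions, weights=[weights[v] for v in versions], k=1)[0]


def mirror(request, primary, shadow):
    """Traffic shadowing: the shadow gets a copy, the user only sees the primary reply."""
    try:
        shadow(request)
    except Exception:
        pass  # a failing shadow must never affect the real user
    return primary(request)


def call_with_fault(handler, rng, abort_percent=0.0):
    """Fault injection: fail a percentage of calls before they reach the service."""
    if rng.random() * 100 < abort_percent:
        return 503  # simulated "service unavailable"
    return handler()


rng = random.Random(42)          # seeded so the simulation is repeatable
weights = {"v1": 99, "v2": 1}    # 1% of traffic goes to the canary, v2
hits = {"v1": 0, "v2": 0}
for _ in range(10_000):
    hits[route(weights, rng)] += 1
print(hits)

# The shadow version crashes, but the caller still gets the primary's answer.
print(mirror("GET /orders", primary=lambda r: "200 OK", shadow=lambda r: 1 / 0))

# Force every call to fail to check how a caller copes with an outage.
print(call_with_fault(lambda: 200, rng, abort_percent=100))
```

"Dialling up" the canary in this sketch just means changing the numbers in `weights`, which is why the approach gives such granular control.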

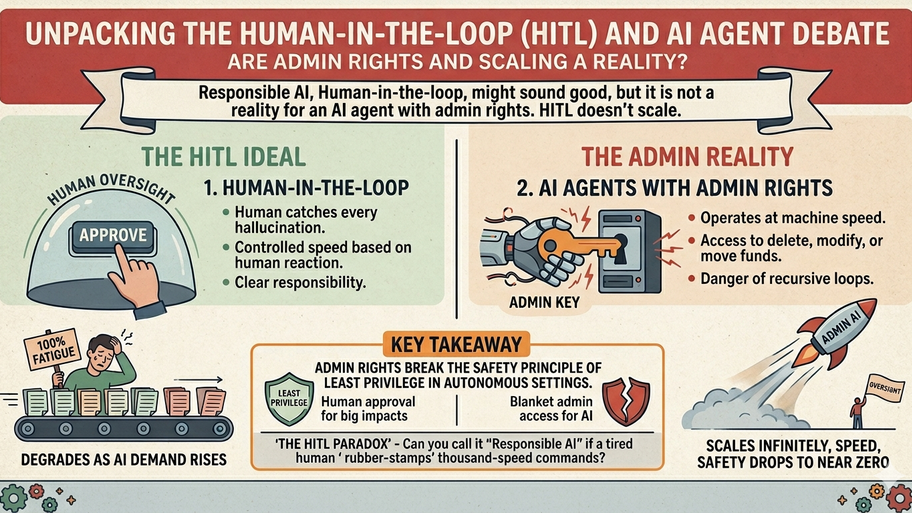

You cannot claim a system is or uses "Responsible AI" just because there is a human button-pusher. If the system is designed to move faster than that human can think, how do you prevent your busine...

Software Engineer in Test, a term used more in Europe than the UK. See also SDET: https://www.ministryoftesting.com/software-testing-glossary/sdet-software-development-engineering-in-tests

Canary Release (noun 😉)

A real-world production deployment strategy that rolls out a new software version or feature to a small, select subgroup of users before making it available to the entire user base, often incrementally. This can be used as an early warning system (risk mitigation). Paired with monitoring tools, incremental rollouts can trigger automated rollbacks when predetermined error rates are detected (the canary part).

Unlike staging environments, a canary release tests how the update interacts with actual production data and diverse user behavior.
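The "predetermined error rate" check can be sketched as a small decision function. This is an illustrative sketch only: `canary_decision`, the 1% error threshold, and the minimum sample size are assumptions chosen for the example, and a real pipeline would read these numbers from its monitoring tool.

```python
def canary_decision(requests, errors, max_error_rate=0.01, min_requests=100):
    """Decide what to do with a canary based on its observed error rate.

    Returns "wait" until enough traffic has been seen, "rollback" when the
    error rate exceeds the agreed threshold, and "promote" otherwise.
    The threshold and sample size here are hypothetical defaults.
    """
    if requests < min_requests:
        return "wait"
    error_rate = errors / requests
    return "rollback" if error_rate > max_error_rate else "promote"


print(canary_decision(requests=50, errors=0))     # too little traffic so far
print(canary_decision(requests=1000, errors=5))   # 0.5% errors, under threshold
print(canary_decision(requests=1000, errors=20))  # 2% errors, over threshold
```

The minimum request count matters for testers: a canary that has served almost no traffic will look healthy whatever its real quality.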

A business model where humans and intelligent AI agents work together to improve efficiency and decision-making.

Unlike traditional automation, agentic AI doesn’t just follow rules - it can reason, adapt, and act autonomously. These AI agents handle complex, multi-step tasks through a continuous cycle of perception, reasoning, and action, enabling dynamic problem-solving.

For quality engineers and testers, this means:

AI agents assist in testing and quality processes, reducing repetitive work.

Humans focus on strategic, creative, and high-value activities.

Potential impact - proponents suggest it will bring better employee experience, faster delivery, and improved customer satisfaction.

Acceptance testing is often the final check in software development to ensure the product meets goals and expectations before release.

Purpose of Acceptance Testing

Validates user and business needs to ensure satisfaction.

Reduces post-launch risks by catching issues before release.

Acts as a final verification before deployment.

Identifies requirement gaps between developers and users.

Types of Acceptance Testing

Alpha Testing > Internal testing by developers to catch early bugs.

Beta Testing > Real-world testing by external users before release.

Business Acceptance Testing (BAT) > Checks alignment with business goals and workflows.

Contract Acceptance Testing (CAT) > Ensures all contractual requirements are fulfilled.

Operational Acceptance Testing (OAT) > Confirms system readiness and infrastructure reliability.

Regulation Acceptance Testing (RAT) > Verifies compliance with industry regulations.

User Acceptance Testing (UAT) > Validates if the software meets end-user needs.

Develop the mindset and practical coaching techniques that help teams build shared responsibility for continuous quality

What is it?

Hidden text in emails using font-size:0 or similar CSS tricks. It appears in the preview pane but not in the visible body, to falsely reassure recipients.

Testing?

Inspect raw HTML > Look for <span style="font-size:0px"> or elements styled with display:none.

Compare preview vs body > If preview mentions “secure” or “verified” but body doesn’t, flag it.

Search for suspicious phrases > Hidden text often says “This email is safe” or “Verified sender.”

Automation > flag any zero-font or hidden text in email HTML.

Cross-Client checks > test in Gmail, Outlook, Apple Mail, because behavior varies between clients.

Educate users and peers > remind them 'Preview text can be manipulated - verify sender and links before clicking.'
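The automation check above could start from something like the following Python sketch, which uses only the standard library `html.parser` module. It is a starting point under stated assumptions, not a complete detector: `find_hidden_text` and `HiddenTextFinder` are names invented for this example, only inline `style` attributes are inspected (not stylesheets or other hiding tricks), and badly nested HTML may confuse the simple element stack.

```python
import re
from html.parser import HTMLParser

# Matches font-size of zero (0, 0px, 0.0em ...) or display:none in an inline style.
HIDDEN_STYLE = re.compile(
    r"font-size\s*:\s*0+(\.0+)?(px|pt|em|rem|%)?(?=\s|;|$)|display\s*:\s*none",
    re.IGNORECASE,
)

# Elements with no closing tag, so they must not be pushed onto the stack.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img",
             "input", "link", "meta", "source", "track", "wbr"}


class HiddenTextFinder(HTMLParser):
    def __init__(self):
        super().__init__()
        self._stack = []       # one flag per open element: does it hide its content?
        self.hidden_text = []  # text found inside hidden elements

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        style = dict(attrs).get("style") or ""
        self._stack.append(bool(HIDDEN_STYLE.search(style)))

    def handle_endtag(self, tag):
        if tag in VOID_TAGS:
            return
        if self._stack:
            self._stack.pop()

    def handle_data(self, data):
        # Text counts as hidden if any enclosing element hides its content.
        if any(self._stack) and data.strip():
            self.hidden_text.append(data.strip())


def find_hidden_text(html):
    """Return the text fragments that sit inside zero-font or display:none elements."""
    finder = HiddenTextFinder()
    finder.feed(html)
    finder.close()
    return finder.hidden_text


email_html = (
    '<p>Please review the attached invoice.</p>'
    '<span style="font-size:0px">This email is safe</span>'
    '<p style="font-size:0.8em">Small print</p>'
)
print(find_hidden_text(email_html))  # ['This email is safe']
```

Any non-empty result is worth flagging for review, and the returned text can then be searched for the suspicious phrases listed above.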

See also - how to identify people using AI when applying for jobs...
